This isn’t a gloat post. In fact, I was completely oblivious to this massive outage until I tried to check my bank balance and it wouldn’t log in.

Apparently Visa Paywave, banks, some TV networks, EFTPOS, etc. have gone down. Flights have had to be cancelled as some airlines’ systems have also gone down. Gas stations and public transport systems are inoperable, and numerous Windows systems and Microsoft services are affected. (At least according to one of my local MSMs.)

Seems insane to me that one company’s messed-up update could cause so much global disruption and take so many systems down :/ This is exactly why centralisation of services and large corporations gobbling up smaller companies and becoming behemoth services is so dangerous.

What?! No, it must be Kaspersky!

/s

Would you really be paying for Crowdstrike for use at home?

From my understanding, they have some ring 0 thing that fucked up. Could that not in theory happen on our beloved Linux systems? Or does the kernel generally not give that option?

My brother in Christ, I’ve borked Linux systems with a misplaced text file =D

So have I, multiple times, yeah

♬This is me ♬ - https://fosstodon.org/@vanillaos/112749170589287081


I love how everyone understands the issue wrong. It’s not about being on Windows or Linux. It’s about the ecosystem that is commonplace and that people are used to on Windows or Linux. On Windows it’s accepted that every stupid anticheat can drop its filthy paws into ring 0 and normies don’t mind. Linux has fostered a less clueless community, but ultimately it’s a reminder to keep vigilant and strive for pure and well documented open source with the correct permissions.

BSODs won’t come from userspace software

While that is true, it makes sense for antivirus/edr software to run in kernelspace. This is a fuck-up of a giant company that sells very expensive software. I wholeheartedly agree with your sentiment, but I mostly see this as a cautionary tale against putting excessive trust and power in the hands of one organization/company.

Imagine if this was actually malicious instead of the product of incompetence, and the update instead ran ransomware.

If it was malicious it wouldn’t have had the reach a trusted platform would. What made the xz exploit so scary was the combination of reach and malicious intent.

I like open source software but that’s one big benefit of proprietary software. Not all proprietary software is bad. We should recognize the ones doing their best to avoid anti-consumer practices and genuinely trying to serve their customers’ needs to the best of their abilities.

Crowdstrike does have Linux and Mac versions. Not sure who runs them.

That’s precisely why I didn’t blame windows in my post, but the windows-consumer mentality of “yeah install with privileges, shove genshin impact into ring 0 why not”

Linux can have the same issue. We have to keep the culture on our side here vigilant and pure near the kernel.

I deployed it for my last employer on our Linux environment. My buddies who still work there said Linux was fine while they had to help the Windows admins fix their hosts.

Me too. I’m also grateful I still accept cash.

after reading all the comments I still have no idea what the hell crowdstrike is

Seems to be some sort of kernel-embedded threat detection system. Which is why it was able to easily fuck the OS. It was running in the most trusted space.

AV and EDR; they offer other solutions as well. I think their main selling point is tamper-proof protection.

Company offering new-age antivirus solutions, which is to say that instead of being mostly signature-based, it tries to look at application behavior. If some user opened not_a_virus_please_open.docx from their spam folder, Word might be exploited and end up running some malware that tries to encrypt the entire drive. It’s supposed to sniff out that 1. Word normally opens and saves like one document at a time and 2. some unknown program is being overly active. And so it should stop that and ring some very loud alarm bells at the IT department.

Basically they doubled down on the heuristics-based detection and by that, they claim to be able to recognize and stop all kinds of new malware that they haven’t seen yet. My experience is that they’re always the outlier on the top-end of false positives in business AV tests (eg AV-Comparatives Q2 2024) and their advantage has mostly disappeared since every AV has implemented that kind of behavior-based detection nowadays.
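To make the "overly active program" idea concrete, here is a toy sketch of that kind of behavior heuristic. It is not how Falcon or any real AV works; the class name `WriteBurstDetector`, the thresholds, and the process names are all made up for illustration. It only flags a process that touches many distinct files within a short time window.

```python
from collections import defaultdict, deque


class WriteBurstDetector:
    """Toy heuristic: flag a process that writes many distinct files quickly.

    Hypothetical illustration only; thresholds are arbitrary assumptions.
    """

    def __init__(self, max_files=20, window_seconds=10.0):
        self.max_files = max_files
        self.window_seconds = window_seconds
        # process name -> recent (timestamp, path) write events
        self._events = defaultdict(deque)

    def record_write(self, process, path, timestamp):
        """Record one file write; return True if the process looks suspicious."""
        events = self._events[process]
        events.append((timestamp, path))
        # Forget writes that fell out of the sliding time window.
        while events and timestamp - events[0][0] > self.window_seconds:
            events.popleft()
        distinct_paths = {p for _, p in events}
        return len(distinct_paths) > self.max_files


detector = WriteBurstDetector(max_files=3, window_seconds=10.0)
# Word saving the same document repeatedly stays under the threshold.
word_flags = [detector.record_write("winword.exe", "report.docx", t) for t in (0.0, 1.0)]
# An unknown process rewriting five different files within half a second does not.
burst_flags = [
    detector.record_write("unknown.exe", f"file{i}.docx", 2.0 + i * 0.1)
    for i in range(5)
]
```

A real product would weigh many more signals, which is also where the false positives mentioned above come from: a backup tool or a compiler looks a lot like this burst pattern.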

I wanted to share the article with friends and copy a part of the text I wanted to draw attention to but the asshole site has selection disabled. Now I will not do that and timesnownews can go fuck themselves

It is annoying. Some possible solutions:

On desktop: Using Shift + ALT you often can overrule this and select text anyway.

On mobile: Using the reader mode or the Print preview often works. It does for me on this website.

The Firefox reader mode button doesn’t show up for me on that site. I wonder if it’s just a poor site or if they are intentionally trying to break it.

Could be both. You can enforce it: https://addons.mozilla.org/en-US/firefox/addon/activate-reader-view/

Here’s the entire article:

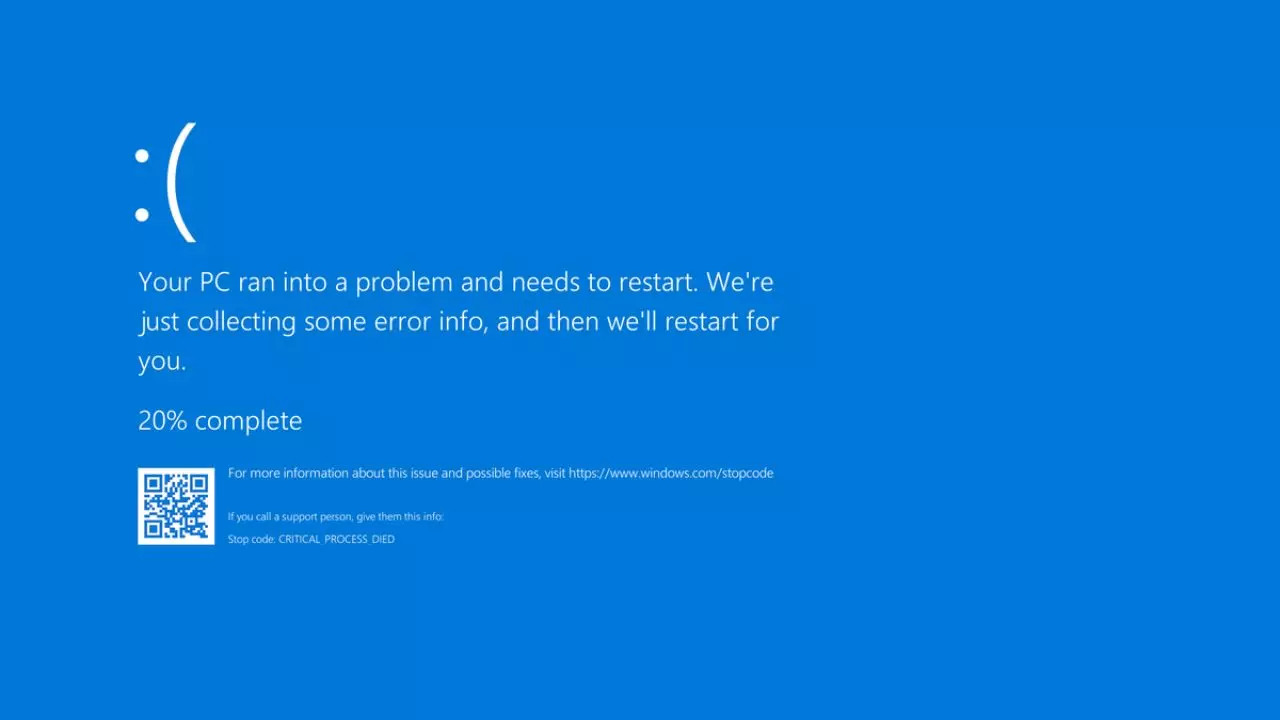

Latest Crowdstrike Update Issue: Many Windows users are experiencing Blue Screen of Death (BSOD) errors due to a recent CrowdStrike update. The issue affects various sensor versions, and CrowdStrike has acknowledged the problem and is investigating the cause, as stated in a pinned message on the company’s forum.

Who Have Been Affected

Australian banks, airlines, and TV broadcasters first reported the issue, which quickly spread to Europe as businesses began their workday. UK broadcaster Sky News couldn’t air its morning news bulletins, while Ryanair experienced IT issues affecting flight departures. In the US, the Federal Aviation Administration grounded all Delta, United, and American Airlines flights due to communication problems, and Berlin airport warned of travel delays from technical issues.

In India too, numerous IT organisations were reporting company-wide issues. Akasa Airlines and Spicejet experienced technical issues affecting online services. Akasa Airlines’ booking and check-in systems were down at Mumbai and Delhi airports due to service provider infrastructure issues, prompting manual check-in and boarding. Passengers were advised to arrive early, and the airline assured swift resolution. Spicejet also faced problems updating flight disruptions and was actively working to fix the issue. Both airlines apologized for the inconvenience caused and promised updates as soon as the problems were resolved.

Crowdstrike’s Response

CrowdStrike acknowledged the problem, linked to their Falcon sensor, and reverted the faulty update. However, affected machines still require manual intervention. IT admins are resorting to booting into safe mode and deleting specific system files, a cumbersome process for cloud-based servers and remote laptops. Reports from IT professionals on Reddit highlight the severity, with entire companies offline and many devices stuck in boot loops. The outage underscores the vulnerability of interconnected systems and the critical need for robust cybersecurity solutions. IT teams worldwide face a long and challenging day to resolve the issues and restore normal operations.

What to Expect:

-A Technical Alert (TA) detailing the problem and potential workarounds is expected to be published shortly by CrowdStrike.

-The forum thread will remain pinned to provide users with easy access to updates and information.

What Users Should Do:

-Hold off on troubleshooting: Avoid attempting to fix the issue yourself until the official Technical Alert is released.

-Monitor the pinned thread: This thread will be updated with the latest information, including the TA and any temporary solutions.

-Be patient: Resolving software conflicts can take time. CrowdStrike is working on a solution, and updates will be posted as soon as they become available.

In an automated reply from Crowdstrike, the company had stated: CrowdStrike is aware of reports of crashes on Windows hosts related to the Falcon Sensor. Symptoms include hosts experiencing a blue screen error related to the Falcon Sensor. The course of current action will be - our Engineering teams are actively working to resolve this issue and there is no need to open a support ticket. Status updates will be posted as we have more information to share, including when the issue is resolved.

For Users Experiencing BSODs:

If you’re encountering BSOD errors after a recent CrowdStrike update, you’re not alone. This appears to be a widespread issue. The upcoming Technical Alert will likely provide specific details on affected CrowdStrike sensor versions and potential workarounds while a permanent fix is developed.

If you have urgent questions or concerns, consider contacting CrowdStrike support directly.

Something tells me that isn’t going to provide the comfort it was meant to.

I work in hospitality and our systems are completely down. No POS, no card processing, no reservations, we’re completely f’ked.

Our only saving grace is the fact that we are in a remote location and we have power outages frequently. So operating without a POS is semi-normal for us.

I’ve worked with POS systems my whole career and I still can’t help thinking Piece Of Shit whenever I see it

A couple of days ago a Windows 2016 server started a license strike in my farm … Coincidence?

Unless your server was running Crowdstrike and also hosted in a time machine, yes it is.

No and yes. I was joking…

Yes.

Is there an easy way to silence every fuckdamn sanctimonious linux cultist from my lemmy experience?

Secondly, this update fucked linux just as bad as windows, but keep huffing your own farts. You seem to like it.

I’d unsubscribe from [email protected] for a start.

I’m pretty sure this update didn’t get pushed to linux endpoints, but sure, linux machines running the CrowdStrike driver are probably vulnerable to panicking on malformed config files. There are a lot of weirdos claiming this is a uniquely Windows issue.

Thanks for the tip, so glad Lemmy makes it easy to block communities.

Also: It seems everyone is claiming it didn’t affect Linux, but as part of our corporate cleanup yesterday I had 8 Linux boxes that I needed to drive to the office for, to throw a head on and reset their iDRAC. So sure, maybe they all just happened to fail at the same time, but in my 2 years on this site we’ve never had more than 1 down at a time, and never for the same reason. I’m not the tech head of the site by any means and it certainly could be unrelated, but people with significantly greater experience than me in my org chalked this up to Crowdstrike.


username… checks out?

Oh you really have no fucking clue. It’s medical and no treatment has worked for more than a few weeks. it’s only a matter of time before I am banned. Now imagine living with that for 4+ decades and being the butt of every thread’s joke.

A real shame that can’t be considered medical discrimination.

That sounds exhausting. I hope you find peace, one day.

Microsoft should test all its products on its own computers, not on ours. Make an update, test it, and only then post it online.

Microsoft has nothing to do with this. This is entirely on Crowdstrike.

I’ve just spent the past 6 hours booting into safe mode and deleting CrowdStrike files on servers.

Feel you there. 4 hours here. All of them cloud instances where getting access to the actual console isn’t as easy as it should be, and trying to hit F8 to get the menu to get into safe mode can take a very long time.

Ha! Yes. Same issue. Clicking Reset in vSphere and then quickly switching tabs to hold down F8 has been a ball ache to say the least!

What I usually do is set next boot to BIOS so I have time to get into the console and do whatever.

Also, instead of using a browser, I prefer to connect VMware Workstation to vCenter so all the consoles open instantly in their own tabs in the workspace.

Just go into settings and add a boot delay, then set it back when you’re done.

Can’t you automate it?

Since it has to happen in windows safe mode it seems to be very hard to automate the process. I haven’t seen a solution yet.

Sadly not. Windows doesn’t boot. You can boot it into safe mode with networking, at which point maybe with Ansible we could log in to delete the file, but since it’s still manual work to get Windows into safe mode there’s not much point.

It is theoretically automatable, but on bare metal it requires having hardware that’s not normally just sitting in every data centre, so it would still require someone to go and plug something into each machine.

On VMs it’s more feasible, but on those VMs most people are probably just mounting the disk images and deleting the bad file to begin with.
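For the VM case, the "mount the disk image and delete the bad file" step could be sketched roughly like this. It assumes the guest's Windows volume is already mounted somewhere on a repair host. The function name `remove_matching_files`, the mount point, the directory, and the file pattern are all placeholders for illustration, not something given in this thread. It defaults to a dry run so nothing is deleted by accident.

```python
from pathlib import Path


def remove_matching_files(mount_root, relative_dir, pattern, dry_run=True):
    """Find (and optionally delete) files matching `pattern` on a mounted volume.

    All arguments are supplied by the caller; nothing here is specific to any
    vendor. Returns the list of matching paths, whether or not they were deleted.
    """
    target_dir = Path(mount_root) / relative_dir
    if not target_dir.is_dir():
        # Wrong mount point, or the product isn't installed on this guest.
        return []
    matches = sorted(p for p in target_dir.glob(pattern) if p.is_file())
    if not dry_run:
        for path in matches:
            path.unlink()
    return matches
```

The publicly reported workaround targeted files matching `C-00000291*.sys` under `Windows/System32/drivers/CrowdStrike`. Treat those values as an assumption and check the vendor's guidance before deleting anything. Also note that path matching is case-sensitive on a Linux repair host, so the directory names have to match how the volume exposes them.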

I guess it depends on numbers too. We had 200 to work on. If you’re talking hundreds more, then looking at automation would be a better solution. In our scenario it was just easier to throw engineers at it. I honestly thought at first this was my weekend gone but we got through them easily in the end.

The real problem with VM setups is that the host system might have crashed too

Me too. Additionally, I use guix so if a system update ever broke my machine I can just rollback to a prior system version (either via the command line or grub menu).

That’s assuming grub doesn’t get broken in the update…

True, then I’d be screwed. But, because my system config is declared in a single file (plus a file for channels) i could re-install my system and be back in business relatively quickly. There’s also guix home but I haven’t had a chance to try that.

I would definitely recommend using guix home because having a separate config for your more user-facing stuff is so convenient (plus no need for root access to install a package declaratively). (Side note: take this with a grain of salt because I don’t use GNU Guix, I use NixOS.)

This is exactly why centralisation of services and large corporations gobbling up smaller companies and becoming behemoth services is so dangerous.

It’s true, but the other side of the same coin is that with too much solo implementation you lose benefits of economy of scale.

But indeed the world seems like a village today.

you lose benefits of economy of scale.

I think you mean - the shareholders enjoy the profits of scale.

When a company scales up, prices are rarely reduced. Users do get increased community support through common experiences especially when official channels are congested through events like today, but that’s about the only benefit the consumer sees.

That’s a hell of a strike to the crowd

Crowdstrikeout

Crowd II: The Struckening