Does Wine run on Wayland?

Edit: had to look up wth HDR is. Seems like a marketing gimmick.

Anti Commercial AI thingy

Inserted with a keystroke running this script on linux with X11

````bash
#!/usr/bin/env nix-shell
#!nix-shell -i bash --packages xautomation xclip

# Give the hotkey a moment to be released before pasting
sleep 0.2
# Build the spoiler block (including this script's own source) and copy it
(echo '::: spoiler Anti Commercial AI thingy
[CC BY-NC-SA 4.0](https://creativecommons.org/licenses/by-nc-sa/4.0/)

Inserted with a keystroke running this script on linux with X11
```bash'
cat "$0"
echo '```
:::') | xclip -selection clipboard
# Simulate Ctrl+V to paste it into the focused window
xte "keydown Control_L" "key V" "keyup Control_L"
````

It isn’t, it’s just that marketing is really bad at displaying what HDR is about.

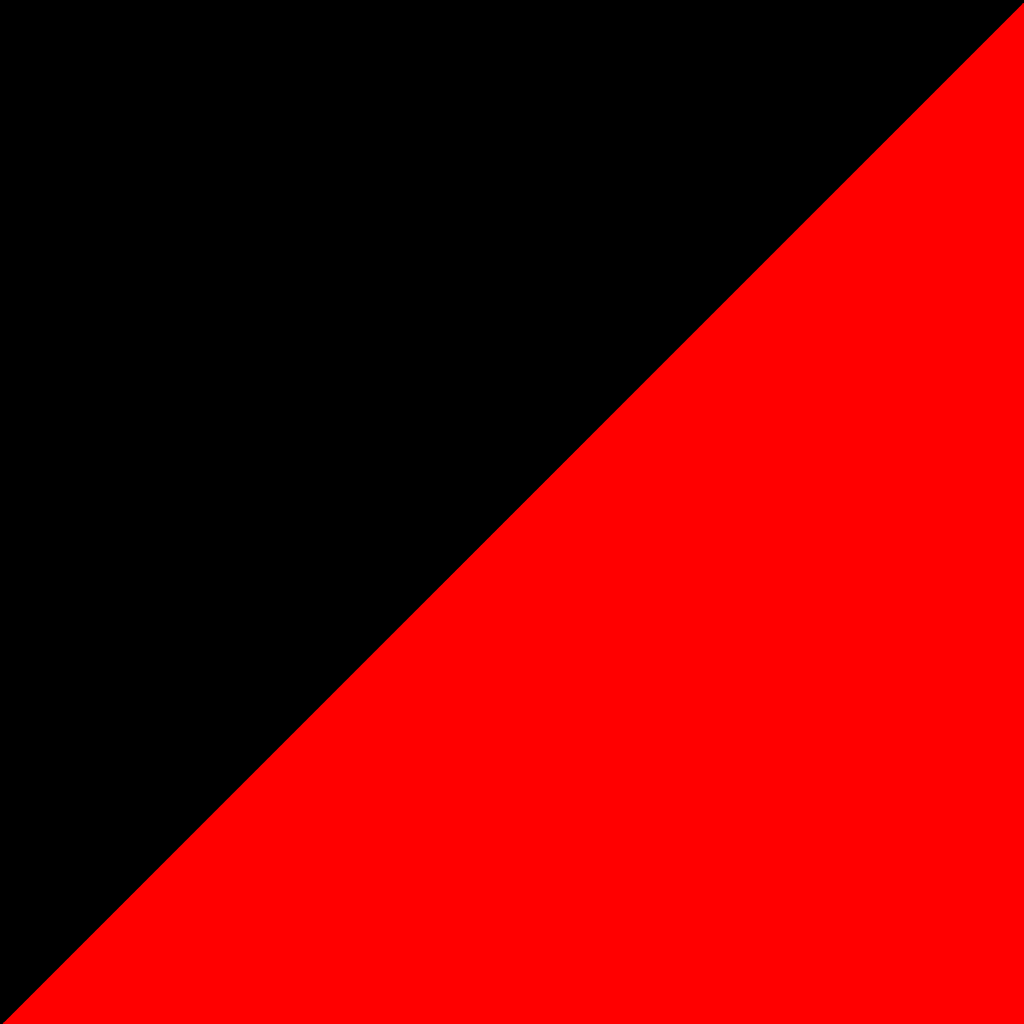

HDR means each color channel that used 8 bits can now use 10 bits, sometimes more. That means going from 256 shades per channel to 1024, allowing a wider range of shades to be displayed in the same picture and avoiding the color banding problem:
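The numbers are just powers of two per channel, cubed for the three channels. A minimal bash sketch of the arithmetic (the function names `shades` and `total_colors` are made up for illustration):

```bash
#!/usr/bin/env bash
# Shades per channel at a given bit depth: 2^bits
shades() {
  echo $(( 1 << $1 ))
}

# Total colors for three channels (R, G, B) at that bit depth: (2^bits)^3
total_colors() {
  local s
  s=$(shades "$1")
  echo $(( s * s * s ))
}

for bits in 8 10 12; do
  printf '%2d-bit: %5d shades per channel, %12d colors\n' \
    "$bits" "$(shades "$bits")" "$(total_colors "$bits")"
done
```

So 8-bit gives about 16.7 million colors and 10-bit a bit over a billion; the extra shades per channel are what make smooth gradients less likely to show visible steps.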

I have never seen banding before; the image seems specifically picked to show the effect. I know it’s common when converting to 256 colors or fewer, e.g. if you turn images into SVGs for some reason, or GIFs (actual GIFs, not video).

Also dithering exists.

Anyway, it’ll surely be standard at some point in the future, but it’s very much a small quality improvement and not something one definitely needs.

the image seems specifically picked to show the effect

Well, of course it is.

Banding is more common in synthetic gradients though, like in games and webpages. A really easy way to see it is to use a CSS gradient as a web page background.
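A minimal sketch of that test, assuming a browser and `xdg-open` are available: the bash function below (the name `make_banding_page` and the gray values are arbitrary, hypothetical choices) writes a page whose whole background is a dark, narrow gradient, where banding tends to be easiest to spot.

```bash
#!/usr/bin/env bash
# Write a minimal HTML page whose whole background is a dark CSS gradient.
# #101010 -> #202020 spans only 17 distinct 8-bit gray levels.
make_banding_page() {
  local out="${1:-banding-test.html}"
  cat > "$out" <<'EOF'
<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <title>Banding test</title>
  <style>
    html, body { margin: 0; height: 100%; }
    body { background: linear-gradient(to right, #101010, #202020); }
  </style>
</head>
<body></body>
</html>
EOF
  echo "$out"
}
```

Open it with `xdg-open "$(make_banding_page /tmp/banding-test.html)"`. With only 17 gray levels across the window, each band on a 1920-pixel-wide window is roughly 110 px wide. Some browsers may dither gradients, which can hide the stripes.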

Fair enough. Dithering would still be an option though. But if it’s not done I agree there can be visible stripes in some cases.
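To illustrate what dithering buys you, here is a toy 1-D sketch in bash/awk (hypothetical function name, not how any particular renderer does it): a smooth ramp is quantized to very few levels, once by plain rounding and once with a small ordered-dither threshold pattern.

```bash
#!/usr/bin/env bash
# Quantize a smooth 0..1 ramp of N pixels to LEVELS output levels, either by
# plain rounding ("plain") or with a 1-D ordered (Bayer-style) dither ("dither").
quantize_ramp() {
  awk -v n="$1" -v levels="$2" -v mode="$3" 'BEGIN {
    # 1-D ordered-dither thresholds: pattern 0,2,1,3 mapped to (i + 0.5) / 4
    t[0] = 0.125; t[1] = 0.625; t[2] = 0.375; t[3] = 0.875
    max = levels - 1
    for (i = 0; i < n; i++) {
      x = (i / (n - 1)) * max          # ideal, unquantized value
      if (mode == "dither")
        q = int(x + t[i % 4])          # threshold varies from pixel to pixel
      else
        q = int(x + 0.5)               # same rounding everywhere -> bands
      if (q > max) q = max
      printf "%s%d", (i ? " " : ""), q
    }
    printf "\n"
  }'
}

quantize_ramp 16 4 plain
quantize_ramp 16 4 dither
```

With plain rounding, neighboring pixels collapse into long runs of one value, which is what shows up as stripes; with dithering, pixels near a band edge alternate between the two nearest levels, so the average over a few pixels follows the ramp. Real dithering is 2-D and often uses error diffusion instead.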

Also I wanted to apologize for the negative wording in my above comment. That was uncalled for, even if I think HDR is totally not worth it at the moment.

the image seems specifically picked to show the effect.

Yeah, they’ve reduced the colour depth to show off the effect, so you can see it without already having HDR.

I find it a lot more noticeable in darker images/videos, and places where you’re stuck with a small subset of the total colour depth.

That’s just 10-bit color, which is a thing and does reduce banding, but it’s only one part of the various HDR specs. HDR also includes a significantly wider color space, as well as various methods (depending on which spec you’re using) of mapping the mastered video to the capabilities of the end user’s display. In addition, to achieve the higher contrast HDR calls for, displays need some form of local dimming, adjusting the brightness of different parts of the image either through backlight zones in LCDs or per pixel in self-emissive technologies like OLED.

Thank you.

I assume HDR then has to be explicitly encoded into images (and video) to have true HDR, otherwise it’s just upsampled? If that’s the case, I’m also assuming most media out there is not encoded in HDR, and further, if that’s correct, does it really make a difference? I’m assuming upsampling means inferring new values, probably using Gaussian smoothing, dithering, or some other method.
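One common way to turn 8-bit values into 10-bit ones is bit replication, which just rescales the existing values rather than inferring new ones. A bash sketch (the function names are hypothetical) that also counts how many distinct 10-bit values come out:

```bash
#!/usr/bin/env bash
# Expand an 8-bit code value (0-255) to 10 bits (0-1023) by bit replication:
# shift left by 2 and copy the top two bits into the new low bits,
# so 0 maps to 0 and 255 maps to 1023.
up8to10() {
  echo $(( ($1 << 2) | ($1 >> 6) ))
}

# Count how many distinct 10-bit values the 256 possible 8-bit inputs produce.
distinct_after_upsampling() {
  i=0
  while [ "$i" -le 255 ]; do
    up8to10 "$i"
    i=$((i + 1))
  done | sort -u | awk 'END { print NR }'
}
```

`distinct_after_upsampling` prints 256: the 10-bit output still uses only 256 of the 1024 possible levels, so upsampling alone cannot add shades that were never captured. Players may add dithering or smoothing on top, but that mostly hides the steps rather than recovering lost detail.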

Somewhat related: my current screens support 4K, but when watching a 4K video at 60 fps side by side, on one screen at 4K resolution and another at 1080p, no difference could be seen. It wouldn’t surprise me if that were the same with HDR, but I might be wrong.

yes, from the capture (camera) all the way to distribution the content has to preserve the HDR bit depth. Some content on YouTube is in HDR (that is noted in the quality settings along with 1080p, etc), but the option only shows if both the content is HDR and the device playing it has HDR capabilities.

Regarding streaming, there is already a lot of HDR content out there, especially newer shows. But stupid DRM has always pushed us to alternative sources when it comes to playback quality on Linux anyway.

no difference could be seen

If you’re not seeing a difference between 4K and 1080p though, even up close, maybe your media isn’t really 4K. I find the difference to be quite noticeable.
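One way to check both whether a file really is 4K and whether it actually carries HDR is to look at its stream metadata with `ffprobe` (part of FFmpeg). A sketch, assuming `ffprobe` is installed; the helper names are hypothetical:

```bash
#!/usr/bin/env bash
# Print resolution, pixel format and color metadata of the first video stream.
describe_video() {
  ffprobe -v error -select_streams v:0 \
    -show_entries stream=width,height,pix_fmt,color_transfer,color_primaries \
    -of default=noprint_wrappers=1 "$1"
}

# Succeed if a color_transfer value is one of the two common HDR transfer
# functions: PQ ("smpte2084", used by HDR10) or HLG ("arib-std-b67").
is_hdr_transfer() {
  case "$1" in
    smpte2084|arib-std-b67) return 0 ;;
    *) return 1 ;;
  esac
}
```

`describe_video some-video.mkv` (placeholder file name) prints lines like `width=3840` and `height=2160` for real 4K. HDR10 files typically report a 10-bit `pix_fmt` such as `yuv420p10le` together with `color_primaries=bt2020` and `color_transfer=smpte2084`; if the color tags are missing or `unknown`, players generally have no HDR signalling to act on.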

yes, from the capture (camera) all the way to distribution the content has to preserve the HDR bit depth.

Ah, that’s what I thought. Thanks.

If you’re not seeing a difference between 4K and 1080p though, even up close, maybe your media isn’t really 4K. I find the difference to be quite noticeable.

I tried with the best-known test video, Big Buck Bunny. Its website is now down (the Internet Archive has it), but I did the test back when it was up. I also found a few 4K videos on YouTube and elsewhere. Maybe the people I tested it with and I just aren’t sensitive to 4K video on 30-35 inch screens.

aren‘t sensitive to 4K video

So you’re saying you need glasses?

But yes, it does make a difference how much of your field of view is covered. If it’s a small screen and you’re relatively far away, 4K isn’t doing anything. And of course, you need a 4K-capable screen in the first place, which is still not a given for PC monitors, precisely due to their size. For a 21" desktop monitor, it’s simply not necessary. Although I’d argue that less than 4K on a 32" screen about an arm’s length away from you (like on a desk) is noticeably low-res.
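A rough way to put numbers on size and distance is pixels per degree of visual angle. A common rule of thumb is that 20/20 vision resolves about 1 arcminute, i.e. around 60 pixels per degree, beyond which extra pixels get hard to see. A bash/awk sketch, assuming a viewer centred in front of the screen and taking 28 inches (about 70 cm) as "arm’s length":

```bash
#!/usr/bin/env bash
# Pixels per degree of visual angle for a WIDTH x HEIGHT pixel screen with a
# DIAG-inch diagonal, viewed from DIST inches (square pixels, centred viewer).
ppd() {
  awk -v w="$1" -v h="$2" -v diag="$3" -v dist="$4" 'BEGIN {
    pi = atan2(0, -1)
    ppi = sqrt(w * w + h * h) / diag          # pixel density
    half = 0.5 * pi / 180                     # half a degree in radians
    inches_per_degree = 2 * dist * sin(half) / cos(half)
    printf "%.1f\n", ppi * inches_per_degree
  }'
}

# 32" screen at roughly arm's length (28 in)
echo "4K:    $(ppd 3840 2160 32 28) px/deg"
echo "1080p: $(ppd 1920 1080 32 28) px/deg"
```

With those assumptions the 32" 4K screen comes out at roughly 67 px/deg and the 32" 1080p screen at roughly 34 px/deg, so 4K lands just above the rule-of-thumb threshold and 1080p well below it. Sitting further back or using a smaller screen raises the pixels per degree, which is why 4K stops mattering at some point.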

So you’re saying you need glasses?

No. Just like some people aren’t sensitive to 3D movies, we aren’t sensitive to 4k 🤷

People aren’t “sensitive” to 3D movies due to lack of stereoscopic vision (That’s typical for people who were cross-eyed from birth for example, even if they had corrective surgery). Or they can see them and don’t care or don’t like the effect.

If you’re not “sensitive” to 4K, that would suggest you’re not capable of perceiving fine details and thus don’t have 20/20 vision. Assuming, of course, you were looking at 4K content on a 4K screen at a size and distance where the human eye should generally be capable of distinguishing those details.

HDR is not just marketing. And, unlike what other commenters have said, it’s not (primarily) about greater colour bit depth. That’s only a small part of it. The primary appeal is the following:

Old CRTs and early LCDs had very little brightness and very low contrast, and video mastering and colour spaces reflected that. Most non-HDR (“SDR”) films and series are mastered with a monitor brightness of 80-100 nits in mind (depending on the exact colour space), so the difference between the darkest and the brightest part of the image can also only be 100 nits. That’s not a lot. Even the cheapest new TVs and monitors are more than twice as bright. And of course, you can make the image brighter and artificially increase the contrast, but that’s like upscaling DVDs to HD or HD to 4K.

What HDR set out to do was to provide a specification for video mastering that takes advantage of modern display technology. Modern LCDs can get as bright as several thousand nits, and OLEDs have near-infinite contrast ratios. HDR provides a mastering process with usually 1000-2000 nits peak brightness (depending on the standard), and thus also higher contrast (the darkest and brightest parts of the image can be over 1000 nits apart).

Of course, to truly experience HDR, you’ll need a screen that can take advantage of it: OLED TVs, bright LCDs with local dimming zones (to increase the possible contrast), etc. It is possible to benefit from HDR even on screens that aren’t as good (my TV is an LCD without local dimming and only 350 nits peak brightness, and it does make a noticeable difference, although not a huge one), but for “real” HDR you’d need something better. My monitor, for example, is VESA DisplayHDR 600 certified, meaning it has a peak brightness of 600 nits plus a number of local dimming zones, and the difference in supported games is night and day. And that’s still not even near the peak of HDR capabilities.
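How a 1000-nit master gets squeezed onto a 350-nit panel is up to the TV or player (ITU-R BT.2390 describes one more careful curve). Purely as an illustration, here is a deliberately simple, hypothetical knee-and-compress mapping in bash/awk: luminance below 75% of the display peak passes through unchanged, and everything above is compressed linearly into the remaining headroom. The 75% knee is an arbitrary assumption, not what any particular TV does.

```bash
#!/usr/bin/env bash
# Toy tone mapping: fit content mastered up to MASTER_PEAK nits onto a display
# that reaches DISPLAY_PEAK nits. Usage: tonemap NITS MASTER_PEAK DISPLAY_PEAK
tonemap() {
  awk -v l="$1" -v master="$2" -v disp="$3" 'BEGIN {
    if (l > master) l = master                          # clip above master peak
    if (master <= disp) { printf "%.1f\n", l; exit }    # display can show it all
    knee = 0.75 * disp                                  # assumed knee point
    if (l <= knee)
      out = l                                           # pass through
    else                                                # compress the rest linearly
      out = knee + (l - knee) * (disp - knee) / (master - knee)
    printf "%.1f\n", out
  }'
}
```

`tonemap 100 1000 350` leaves a 100-nit object at 100 nits, while `tonemap 1000 1000 350` puts the brightest highlight of the master at the TV’s 350-nit peak.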

tl;dr: HDR isn‘t just marketing or higher colour depth. HDR video is mastered to the increased capabilities of modern displays, while SDR (“normal”) content is not.

It’s more akin to the difference between DVD and Blu-ray. The difference is huge, as long as you have a screen that can take advantage.

Been watching this drama about HDR for a year now, and still can’t be arsed to read up on what it is.

HDR makes stuff look really awesome. It’s super good for real.

HDR or High Dynamic Range is a way for images/videos/games to take advantage of the increased colour space, brightness and contrast of modern displays. That is, if your medium, your player device/software and your display are HDR capable.

HDR content is usually mastered with a peak brightness of 1000nits or more in mind, while Standard Dynamic Range (SDR) content is mastered for 80-100nit screens.

How is this a software problem? Why can’t the display server just tell the monitor "make this pixel as bright as you can (255) and this other pixel as dark as you can (0)"?

In short: Because HDR needs additional metadata to work. You can watch HDR content on SDR screens and it’s horribly washed out. It looks a bit like log footage. The HDR metadata then tells the screen how bright/dark the image actually needs to be. The software issue is the correct support for said metadata.

I’d speculate (I’m not an expert) that the reason for this is that it enables more granularity. Even the 1024 brightness steps that 10-bit colour can produce are nothing compared to the millions-to-one contrast of modern LCDs or the near-infinite contrast of OLED. Besides, screens come in a range of peak brightnesses. I suppose doing it this way lets manufacturers interpret the metadata so it looks best on their screens.

And also, with your solution, a brightness value of 1023 would always be the max brightness of the TV. You don’t always want that, if your TV can literally flashbang you. Sure, you want the sun to be peak brightness, but not every white object is as bright as the sun… That’s the true beauty of a good HDR experience. It looks fairly normal but reflections of the sun or the fire in a dark room just hit differently, when the rest of the scene stays much darker yet is still clearly visible.
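HDR10 avoids that by using the PQ transfer function (SMPTE ST 2084), where code values stand for absolute luminance up to 10,000 nits rather than "as bright as this screen can go". A bash/awk sketch of the PQ EOTF using the ST 2084 constants; the input is assumed to be a normalized signal from 0 to 1 (for example a full-range 10-bit code value divided by 1023), and limited-range video would need converting first:

```bash
#!/usr/bin/env bash
# PQ EOTF (SMPTE ST 2084): normalized PQ signal E in [0, 1] -> luminance in
# nits (cd/m^2). Values outside [0, 1] are not handled.
pq_nits() {
  awk -v e="$1" 'BEGIN {
    m1 = 2610 / 16384
    m2 = 2523 / 4096 * 128
    c1 = 3424 / 4096
    c2 = 2413 / 4096 * 32
    c3 = 2392 / 4096 * 32
    p = e ^ (1 / m2)
    num = p - c1
    if (num < 0) num = 0
    printf "%.1f\n", 10000 * (num / (c2 - c3 * p)) ^ (1 / m1)
  }'
}

for e in 0.25 0.5 0.75 1; do
  echo "PQ signal $e -> $(pq_nits "$e") nits"
done
```

Half of the signal range comes out at only about 92 nits, and a 1000-nit master tops out around 0.75 of the range, so the upper part of the range is reserved for highlights brighter than most of the scene.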

Nah, I don’t Need HDR

HDR is like RGB, sometimes cool if done really well but usually just a useless selling point.

For a second there I thought you were advocating for fluorescent green or monochromatic CRT screens of old.

Those are very cool tho, i still just barely got to experience those at home :D

Yes, I’ll take 8!

40,320?

Nah good old 255 (8-bit obviously)

Yeah I’ll stick with my monochrome 2 color display for now.

Unless I can switch without any loss of gaming compatibility, I definitely don’t want to, sorry.

HDR is cool and I look forward to getting that full game compatibility and eventually making the switch, but it’s just not there yet.

Deleted

I’m not touching Wayland until it has feature parity with X and gets rid of all the weird bugs like cursor size randomly changing and my jelly windows being blurry as hell until they are done animating

Not sure why you’re getting downvoted. I wish I could switch, but only X works reliably.

have you tried plasma 6?

have you tried plasma 6?

It’s not ready yet.

The protocol for apps/games to make use of it is not yet finalized.

I can play games and watch videos in HDR though

deleted by creator

HDR? Ah, you mean when videos keep flickering on Wayland!

I will switch when I need a new GPU.

videos? everything flickers for me on wayland. X.org is literally the only thing keeping me from switching back to windows right now.

Weird. I have Nvidia and I’m using Wayland and I’ve never had these flickering issues. But seems to be a common complaint, so I think I got lucky.

I’ve seen it in Steam off the top of my head but not much else.

Happens on both my NVIDIA machines, my Intel machines see no such behaviour.

I’m running it on laptop with Intel+Nvidia hybrid graphics. I had Steam on iGPU for ages before I realized, recently switched it to Nvidia but haven’t seen the flickering. I remember someone mentioning that it might have something to do with mouse polling rates or something, but I have a really cheap mouse so I might be too cheap to face that issue haha.

No issues in games, it’s the steam client which has issues.

Now that I remember, Firefox extensions are having issues with overlays too (Bitwarden), but I’ve not updated my system in a couple of weeks, which might be to blame here.

For me it’s just games, I’m guessing it’s an Nvidia GPU? I hope explicit sync helps with that.

i’m kind of waiting for an implementation. The “protocol” is useless to me by itself

All of them are already merged. You just have to wait for it to trickle down to whatever you’re using.

deleted by creator

Now that explicit sync has been merged this will be a thing of the past

And it was never a thing on AMD GPUs.

How will HDR affect the black screen I get every time I’ve tried to launch KDE under Wayland?

It will make your screen blacker.

But in all seriousness, your display manager might not support Wayland. Try something like SDDM.

I’m using SDDM and it lets me select Wayland as an option just fine; it’s just that my Nvidia card won’t load my desktop then.

I’m going full AMD next time I upgrade but that’s gonna be a while away…

Nothing you can do then. Stay on x11 until nvidia get their shit together.

They just did now that explicit sync is merged everywhere except wlroots

deleted by creator

It should work with the latest Nvidia driver. It does for me.

how on earth do I know what DM I’m using? Does it come with the compositor? I just start hyprland from the terminal without adding anything to systemd.

Then you aren’t using one
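If you do want to check: on systemd-based distros the enabled display manager is usually hooked up through a `display-manager.service` symlink (`systemctl status display-manager` shows it too). A small bash sketch, assuming systemd and that default symlink location; the function name is hypothetical:

```bash
#!/usr/bin/env bash
# Print the name of the enabled display manager (e.g. sddm, gdm, lightdm) by
# reading where the display-manager.service symlink points.
current_dm() {
  local link="${1:-/etc/systemd/system/display-manager.service}"
  if [ ! -L "$link" ]; then
    echo "none"      # no display manager enabled (e.g. starting from a TTY)
    return 1
  fi
  basename "$(readlink "$link")" .service
}
```

It prints e.g. `sddm`, `gdm` or `lightdm`, or `none` if nothing is enabled, which is the expected result when you start Hyprland straight from a TTY.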

Oh, lightdm has some trouble sometimes on my other computer, are you saying that I could somehow start i3 without it when it breaks itself?

You could start it directly from tty with startx https://wiki.archlinux.org/title/Xinit
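`startx` runs whatever `~/.xinitrc` tells it to. A minimal sketch that creates one for i3 (hypothetical helper; a fuller `~/.xinitrc`, as described on the linked ArchWiki page, typically also sources the scripts in `/etc/X11/xinit/xinitrc.d`, which this skips):

```bash
#!/usr/bin/env bash
# Create a minimal ~/.xinitrc that starts i3, so `startx` works from a TTY
# without any display manager. An existing file is left untouched.
setup_xinitrc() {
  local rc="$HOME/.xinitrc"
  if [ -e "$rc" ]; then
    echo "$rc already exists, not overwriting" >&2
    return 0
  fi
  printf '%s\n' 'exec i3' > "$rc"
}
```

Run `setup_xinitrc` once, then log in on a TTY and run `startx`.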

.

On May 15, Arch users are going to be downloading the NoVideo Wayland Driver.

Smart Nvidia users are ex Nvidia users

Financially smart Nvidia users are Nvidia users

I don’t get what you mean by that

GPUs are expensive. Lots of people can’t afford to ditch nvidia.

Ok, makes sense. I thought you meant that nvidia gpus were cheaper than amd ones, which is obviously not true.

I think that may have been the case early into the Nvidia 30-series but now they seem closer in price and performance so I’m pretty sure my next card will be from AMD when I decide I need an upgrade.

I’m a happy Nvidia user on Wayland. Xorg had a massive bug that forced me to try out Wayland, and it has been really nice and smooth. I was surprised, seeing all the comments. But I might’ve just gotten lucky.

I tried wayland with the newest nvidia driver on Arch and it was unusable and extremely unstable.

I’m somewhere in between. X11 doesn’t work with linux-lts (arch) for me and Wayland stutters occasionally but not always. Usually it happens exclusively in games, some reliably, some when I switch windows and in some when I move around or increase game speed. But I’m excited for explicit sync

Actually, wait until the next DE releases hit the repos; all the nvidia problems just got solved.

Ah yes, the next release has been about to solve all problems for 5 years now.

??? I have been following this for years and nobody I have seen has ever said that with nvidia on wayland

either way it has been tested and actually does so…

Then you didn’t follow closely enough. Nvidia comes up with EGLStreams and Gnome and Plasma accept patches to support this: next release will fix all problems.

Nvidia driver supports GBM: next release will fix all problems.

Only explicit sync is missing but once adopted surely all problems will be fixed.

It’s always one last feature that’s missing for perfect Wayland support…🤦

Nobody thought EGLStreams was a good idea or a solution. GBM made it possible to use Wayland at all, but no devs were saying that would resolve all the issues. The issues are now solved; you can test the changes yourself if you don’t believe me, but this truly is the end.

i’m not saying wait for the next release because they might solve it, I’m saying the current set of patches is confirmed to solve it.

Sorry, I won’t test this myself because I won’t be combining Nvidia with Linux for years to come, but every time it was promised that the X11 fallback for Nvidia would no longer be needed, it was followed by countless bug reports about basic features not working.

That’s the difference between some randos promising it and the devs extensively testing it and confirming it works universally.

Obviously it won’t be all of them but I too am very excited about not having to get lucky with my games flickering or not.

What? Tell me more. Now! Please

Here’s an article explaining the changes. It was written before they were merged, but they’re now merged everywhere except wlroots. That’s coming soon too.

deleted by creator

Joke’s on you, I use NVIDIA

*Cries*

Network transparency OR BUST

deleted by creator

Ok, then buy an RTX 4090 for every computer in the house

deleted by creator

You misunderstand, I don’t want crap graphics on every computer, I want the 4090 driving every computer without having to buy one per computer.

That’s what you could do with network transparency.

deleted by creator

PCs were not intended to have more than 640kb of ram and yet.

The blame for this decrepitude of X11 and its functionality can be placed squarely on Nvidia, since that functionality contradicts Nvidia’s unlimited profit ambitions.

RDP is the anachronism. Why would I want to stream a whole desktop environment with its own separate taskbar, clock and whole user environment? Especially given how janky and laggy it is.

No, I want to stream -just- the application. It should use my system’s color and temperature scheme, interoperate with clipboard and drag&drop, and be basically indistinguishable from a locally running app, except streaming at 500 Mbps AV1 hardware encoded, 12 ms latency max, 16K resolution (yes, this is not a typo), 16-bit HDR, HDR that actually works, sound that works too, every time, yes 8-channel 192 kHz 24-bit lossless. Also capable of pure IP multicast streaming. Yes, that means one application instance visible on multiple computers at the same time, which can be interacted with by multiple users at the same time, with -no- need for the app to be aware of any of this.

Do that with no jank and I’ll sing wayland’s praises.

deleted by creator

And that’s with functional copy&paste and drag&drop? Basically indistinguishable from a local app?

Yes, just go try and see for yourself.

You want to win me over? For starters, provide a layer that supports all hooks and features in `xdotool` and `wmctrl`. As I understand it, that’s nowhere near present, and maybe even deliberately impossible “for security reasons”.

I know about `ydotool` and `dotool`. They’re something but definitely not drop-in replacements.

Unfortunately, I suspect I’ll end up being forced onto Wayland at some point because the easy-use distros will switch to it, and I’ll just have to get used to moving and resizing my windows manually with the mouse. Over and over. Because that’s secure.

deleted by creator

I think the Wayland transition will not be without compromises

May I ask why you don’t use tiling window managers if you don’t like to move windows with the mouse?

Unfortunately, I suspect I’ll end up being forced onto Wayland at some point because the easy-use distros will switch to it, and I’ll just have to get used to moving and resizing my windows manually with the mouse. Over and over. Because that’s secure.

I think you were being sarcastic but it is more secure. Less convenient though.

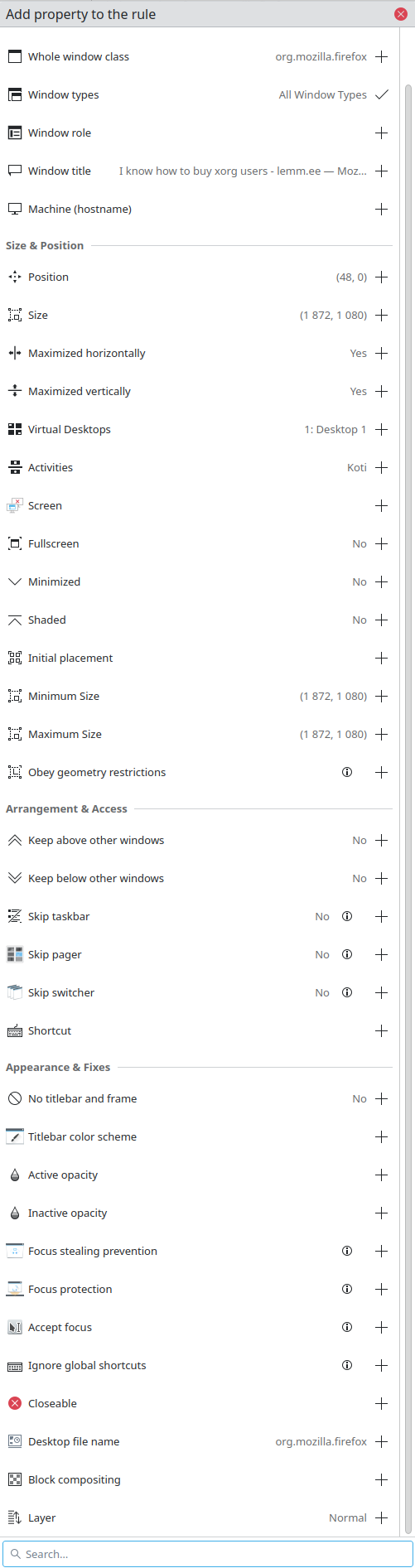

I’m not sure if that’s what you’re looking for, but KDE has nice window rules that can affect all sorts of settings: placement, size, appearance etc. Lots of options. And you can match them per specific window or for the whole application etc. I use it for a few things, mostly to place windows on certain screens and in certain sizes.

Me, not much of a gamer and not a movie buff and having no issues with the way monitors have been displaying things for the past 25 years: No.

When I could no longer see the migraine-inducing flicker while being irradiated by a particle accelerator shooting a phosphor coated screen in front of my face, I was good to go.

It was exciting when we went from green/amber to color!

You already had me at “144hz on one monitor and 60hz on the other so I can enjoy the nice monitor without having to buy a new secondary one.”

`Option "AsyncFlipSecondaries" "true"`
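For reference, an option like that goes into the Xorg config. A sketch that writes it as a drop-in file under `/etc/X11/xorg.conf.d` (needs root there; the file name and the `Identifier` are hypothetical, and if you already have a `Device` section for the NVIDIA card, add the `Option` line there instead):

```bash
#!/usr/bin/env bash
# Write an Xorg drop-in enabling the NVIDIA driver's AsyncFlipSecondaries
# option. CONF_DIR defaults to the usual drop-in directory.
write_async_flip_conf() {
  local dir="${CONF_DIR:-/etc/X11/xorg.conf.d}"
  local file="$dir/20-nvidia-async-flip.conf"   # hypothetical file name
  mkdir -p "$dir"
  cat > "$file" <<'EOF'
Section "Device"
    Identifier "NvidiaGPU"
    Driver     "nvidia"
    Option     "AsyncFlipSecondaries" "true"
EndSection
EOF
  echo "$file"
}
```

Restart X afterwards. As far as I know, this makes flips on the secondary displays asynchronous, so those may show some tearing.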