I’ll take “things business people don’t understand” for $100.

No one hires software engineers to code. You’re hired to solve problems. All of this AI bullshit has 0 capability to solve your problems, because it can only spit out what it’s already ~~stolen from~~ seen somewhere else.

I’ve worked with a few PMs over my 12-year career that think devs are really only there to code like trained monkeys.

I’m at the point where what I work on requires such a depth of knowledge that I just manage my own projects. Doesn’t help that my work’s PM team consistently brings in new hires only to toss them on the difficult projects no one else is willing to take. They see a project is doomed to fail so they put their least skilled and newest person on it so the seniors don’t suffer any failures.

Simplifying things to a level that is understandable for the PMs just leads to overlooked footguns. Trying to explain a small subset of the footguns just leads to them wildly misinterpreting what is going on, causing more work for me to sort out what terrible misconceptions they’ve blasted out to everyone else.

If you can’t actually be a reliable force multiplier, or even someone I can rely on to get accurate information from other teams, just get out of my way please.

It can also throw things against the wall with no concern for fitness-to-purpose. See “None pizza, left beef”.

Cloud tech seller sells cloud

Can I join anyone’s band of AI server farm raiders 24 months from now? Anyone forming a group? I will bring my meat bicycle.

Let’s assume this is true, just for discussion’s sake. Who’s going to be writing the prompts to get the code then? Surely someone who can understand the requirements, make sure the code functions, and then test it afterwards. That’s a developer.

I don’t believe for a single instant that what he says is going to happen, this is just a play for funding… But if it were to happen I’m pretty sure most companies would hire anything that moves for those jobs. You have many examples of companies offloading essential parts of their products externally.

I’ve also seen companies hiring tourism graduates (and other non-engineering backgrounds), giving them a 3-4 week programming course, slapping a “software engineer” sticker on them, and off they go to work on products they have no experience with. Then it’s up to senior engineers to handle all that crap.

This explains so much about 1 in 4 IT people I meet.

No, going by them, they just talk to an AI voice and it will pop out a finished product.

I think that’s the point? They’re saying that those coders will turn into prompt engineers. They didn’t say they wouldn’t have a job, just that they wouldn’t be “coding”.

Which I don’t believe for a minute. I could see it eventually, but it’s not “2 years” away by any stretch of the imagination.

Definitely be coding less I think. Coding or programming is basically the “grunt work”. The real skill is understanding requirements and translating that into some product.

Possibly. But… Here’s the thing. I’ve dealt with “business rules” engines before at a job. I used a few different ones. The idea is always to make coding simpler so non technical people can do it. Unless you couldn’t tell from context, I’m a software engineer lol. I was the one writing and troubleshooting those tools. And it was harder than if it was just in a “normal” language like Java or whatever.

I have a soft spot for this area and there’s a non zero chance this comment makes me obsess over them again for a bit lol. But the point I’m making is that “normal” coding was always better and more useful.

It’s not a perfect comparison because LLMs output “real” code and not code that is “Scratch-like”, but I just don’t see it happening.

I could see using LLMs exclusively over search engines (as a first place to look that is) in 2 years. But we’ll see.


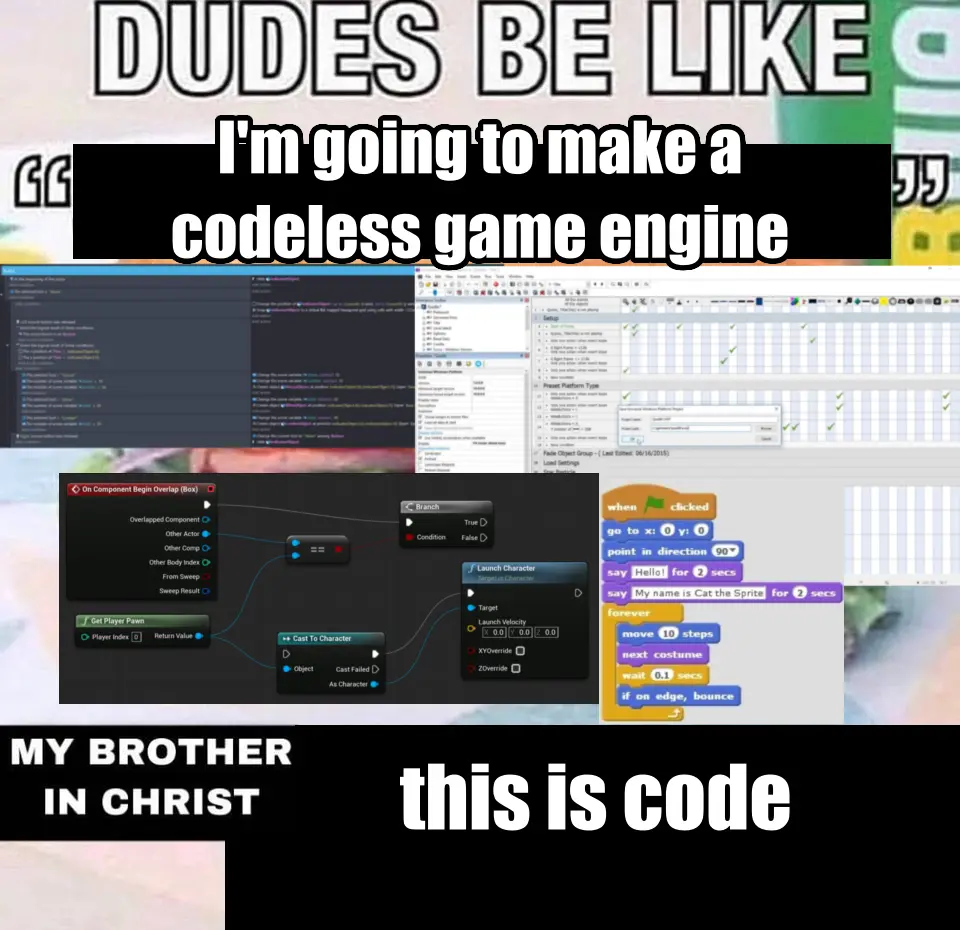

I made this meme a while back and I think it’s relevant

Looking at your examples, I have to object to putting Scratch in there.

My kids use it in clubs, and it’s great for getting algorithmic basics down before the keyboard proficiency is there for real coding.

that’s just how the code is rendered. There’s still all the usual constructs

It’s still code. What makes Scratch special is that it structurally rules out syntax errors while still looking quite like ordinary code. Node editors – I have a love and hate relationship with them. When you’re in e.g. Blender throwing together a shader it’s very very nice to have easy visualisation of literally everything, but then you know you want to compute `abs(a) + sin(b) + c^2` and yep that’s five nodes right there because apparently even the possibility to type in a formula is too confusing for artists. Never mind that Blender allows you to input formulas (without variables though) into any field that accepts a number.
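To sanity-check the “five nodes” count, here is a rough sketch that parses the same formula with Python’s `ast` module. It assumes every function call and every binary operator would need its own node in a node editor. That mapping is an assumption for illustration, not a description of how Blender builds its graphs. `count_operation_nodes` is a made-up helper name, and `^` is written as `**` because `^` means XOR in Python.

```python
import ast


def count_operation_nodes(formula: str) -> int:
    """Count function calls and binary operators in a formula string."""
    tree = ast.parse(formula, mode="eval")
    # Each Call (abs, sin) and each BinOp (+, +, **) is treated as one node.
    return sum(isinstance(node, (ast.Call, ast.BinOp)) for node in ast.walk(tree))


node_count = count_operation_nodes("abs(a) + sin(b) + c**2")
print(node_count)
```

Under that assumption the one-line formula does come out as two calls plus three operators.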

The only people who would say this are people that don’t know programming.

LLMs are not going to replace software devs.

I don’t know if you noticed but most of the people making decisions in the industry aren’t programmers, they’re MBAs.

Irrelevant, anyone who tries to replace their devs with LLMs will crash and burn. The lessons will be learned. But yes, many executives will make stupid ass decisions around this tech.

It’s really sad how even techheads ignore how far LLM coding has come in the last 3 years and what that means in the long run.

Just look how rapidly voice recognition developed once Google started exploiting all of its users’ voice to text data. There was a point that industry experts stated ‘There will never be a general voice recognition system that is 90%+ across all languages and dialects.’ And google made one within 4 years.

The natural bounty of a no-salary programmer in a box is too great for this to ever stop being developed, and the people with the money only want more money, and not paying devs is something they’ve wanted since the coding industry literally started.

Yes, it’s terrible now, but it is also in its infancy. Like voice recognition in the late 90s, it is a novelty with many hiccoughs. That won’t be the case for long, and anyone who confidently thinks it can’t ever happen will be left without recourse when it does.

But that’s not even the worst part about all of this but I’m not going into black box code because all of you just argue stupid points when I do but just so you know, human programming will be a thing of the past outside of hobbyists and ultra secure systems within 20 years.

Maybe sooner

Maybe in 20 years. Maybe. But this article is quoting CEOs saying 2 years, which is bullshit.

I think it’s just as likely that in 20 years they’ll be crying because they scared enough people away from the career that there aren’t enough developers, when the magic GenAI that can write all code still doesn’t exist.

yeah 2 years is bullshit but with innovation, 10 years is still reasonable and fucking terrifying.

The one thing that LLMs have done for me is to make summarizing and correlating data in documents really easy. Take 20 docs of notes about a project and have it summarize where they are at so I can get up to speed quickly. Works surprisingly well. I haven’t had luck with code requests.

Wrong, this is also exactly what people selling LLMs to people who can’t code would say.

It’s this. When boards and non-tech savvy managers start making decisions based on a slick slide deck and a few visuals, enough will bite that people will be laid off. It’s already happening.

There may be a reckoning after, but wall street likes it when you cut too deep and then bounce back to the “right” (lower) headcount. Even if you’ve broken the company and they just don’t see the glide path.

It’s gonna happen. I hope it’s rare. I’d argue it’s already happening, but I doubt enough people see it underpinning recent layoffs (yet).

That’s not what was said. He specifically said coding.

I can see the statement in the same way word processing displaced secretaries.

There used to be two tiers in business. Those who wrote ideas/solutions and those who typed out those ideas into documents to be photocopied and faxed. Now the people who work on problems type their own words and email/slack/teams the information.

In the same way there are programmers who design and solve the problems, and then the coders who take those outlines and make it actually compile.

LLM will disrupt the programmers leaving the problem solvers.

There are still secretaries today. But there aren’t vast secretary pools in every business like 50 years ago.

There is no reason to believe that LLM will disrupt anyone any time soon. As it stands now the level of workmanship is absolutely terrible and there are more things to be done than anyone has enough labor to do. Making it so skilled professionals can do more literally just makes it so more companies can produce quality of work that is not complete garbage.

Juniors produce progressively more directly usable work with reason and autonomy, and they are the only way you develop seniors. As it stands, LLMs do nothing with autonomy and get much of the work they do wrong. Even with improvements they will not, in the near term, actually be a coworker. They remain something you, a skilled person, actually use like a wrench. In the hands of someone who knows nothing they are worth nothing. Thinking this will replace a segment of workers of any stripe is just wrong.

I wrote a comment about this several months ago on my old kbin.social account. That site is gone and I can’t seem to get a link to it, so I’m just going to repost it here since I feel it’s relevant. My kbin client doesn’t let me copy text posts directly, so I’ve had to use the Select feature of the android app switcher. Unfortunately, the comment didn’t emerge unscathed, and I lack the mental energy to fix it due to covid brain fog (EDIT: it appears that many uses of `I` were not preserved). The context of the old post was about layoffs, and it can be found here: https://kbin.earth/m/[email protected]/t/12147

I want to offer my perspective on the AI thing from the point of view of a senior individual contributor at a larger company. Management loves the idea, but there will be a lot of developers fixing auto-generated code full of bad practices and mysterious bugs at any company that tries to lean on it instead of good devs. A large language model has no concept of good or bad, and it has no logic. It will happily generate string-templated SQL queries that are ripe for SQL injection. I’ve had to fix this myself. Things get even worse when you have to deal with a shit language like Bash that is absolutely full of God awful footguns. Sometimes you have to use that wretched piece of trash language, and the scripts generated are horrific. Remember that time when Steam on Linux was effectively running `rm -rf /*` on people’s systems? I’ve had to fix that same type of issue multiple times at my workplace.
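For anyone who hasn’t been bitten by the string-templated SQL problem, here is a minimal sketch using Python’s built-in `sqlite3` module. The `users` table, the two helper functions, and the malicious input are all made up for illustration. This is not the code from the comment above, just the general shape of the bug and the usual fix (bound parameters).

```python
import sqlite3

# Hypothetical in-memory table, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 1), ("bob", 0)])


def find_user_templated(name: str) -> list:
    # Vulnerable: user input is pasted straight into the SQL text.
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_parameterized(name: str) -> list:
    # Safer: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (name,)
    ).fetchall()


malicious = "nobody' OR '1'='1"
leaked = find_user_templated(malicious)    # the OR clause matches every row
safe = find_user_parameterized(malicious)  # treated as a literal, odd-looking name
print(leaked, safe)
```

The templated version returns every row in the table for that input, while the parameterized version returns nothing because no user has that literal name.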

I think LLMs will genuinely transform parts of the software industry, but I absolutely do not think they’re going to stand in for competent developers in the near future. Maybe they can help junior developers who don’t have a good grasp on syntax and patterns and such. I’ve personally felt no need to use them, since I spend about 95% of my time on architecture, testing, and documentation.

Now, do the higher-ups think the way that I do? Absolutely not. I’ve had senior management ask me about how I’m using AI tooling, and they always seem so disappointed when I explain why I personally don’t feel the need for it and what I feel its weaknesses are. Bossman sees it as a way to magically multiply IC efficiency for nothing, so I absolutely agree that it’s likely playing a part in at least some of these layoffs.

Basically, I think LLMs can be helpful for some folks, but my experience is that the use of LLMs by junior developers absolutely increases the workload of senior developers. Senior developers using LLMs can experience a productivity bump, but only if they’re very critical of the output generated by the model. I am personally much faster just relying on traditional IDE auto complete, since I don’t have to change from “I’m writing code” mode to “I’m reviewing code” mode.

The one colleague using AI at my company produced (CUDA) code with lots of memory leaks that required two expert developers to fix. LLMs produce code based on vibes instead of following language syntax and proper coding practices. Maybe that would be ok in a more forgiving high level language, but I don’t trust them at all for low level languages.

I was trying to use it to write a program in Python for this macropad I bought and I have yet to get anything usable out of it. It got me closer than I would have been by myself, and I don’t have a ton of coding experience so its problems are probably partially on me, but everything it’s given me has required me to correct it to work.

Will there even be a path for junior level developers?

The same one they have now, perhaps with a steeper learning curve. The market for software developers is already saturated with disillusioned junior devs who attended a boot camp with promises of 6 figure salaries. Some of them did really well, but many others ran headlong into the fact that it takes a lot more passion than a boot camp to stand out as a junior dev.

From what I understand, it’s rough out there for junior devs in certain sectors.

The problem with this take is the assertion that LLMs are going to take the place of secretaries in your analogy. The reality is that replacing junior devs with LLMs is like replacing secretaries with a network of typewriter monkeys who throw sheets of paper at a drunk MBA who decides what gets faxed.

I’m saying that devs will use LLMs in the same way they currently use word processing to send emails instead of handing handwritten notes to a secretary to format, grammar/spell check, and type.

No

I thought by this point everyone would know how computers work.

That, uh, did not happen.

Good take

It’ll have to improve by an order of magnitude for that effect. Right now it’s basically an improved Stack Overflow.

…and only sometimes improved. And it’ll stop improving if people stop using Stack Overflow, since that’s one of the main places it’s mined for data.

Nah, it’s built into the editors and repos these days.

?

If no one uses Stack Overflow anymore, then no one posts new answers. So AI has no new info to mine.

They are mining the IDE and GitHub.

You seem to be missing what I’m saying, and missing my point. But I’m not going to try to rephrase it again.

AI as a general concept probably will at some point. But LLMs have all but reached the end of the line and they’re not nearly smart enough.

LLMs have already reached the end of the line 🤔

I don’t believe that. At least from an implementation perspective we’re extremely early on, and I don’t see why the tech itself can’t be improved either.

Maybe its current iteration has hit a wall, but I don’t think anyone can really say what the future holds for it.

we’re extremely early on

Oh really! The analysis has been established since the 80’s. It’s so far from early on that the statement is comical.

Transformers, the foundation of modern “AI”, were proposed in 2017. Whatever we called “AI” and “Machine Learning” before that was mostly convolutional networks inspired by the 80’s “Neocognitron”, which is nowhere near as impressive.

The most advanced thing a Convolutional network ever accomplished was DeepDream, and visual Generative AI has skyrocketed in the 10 years since then. Anyone looking at this situation who believes that we have hit bedrock is delusional.

From DeepDream to Midjourney in 10 years is incredible. The next 10 years are going to be very weird.

I’m not trained in formal computer science, so I’m unable to evaluate the quality of this paper’s argument, but there’s a preprint out that claims to prove that current computing architectures will never be able to advance to AGI, and that rather than accelerating, improvements are only going to slow down due to the exponential increase in resources necessary for any incremental advancements (because it’s an NP-hard problem). That doesn’t prove LLMs are end of the line, but it does suggest that additional improvements are likely to be marginal.

LLMs have been around since roughly ~~2016~~ 2017 (a comment below corrected me that the Attention paper was 2017). While scaling them up has improved their performance/capabilities, there are fundamental limitations on the actual approach. Behind the scenes, LLMs (even multimodal ones like GPT-4) are trying to predict what is most expected. While that can be powerful, it means they can never innovate or be truth systems.

For years we used things like tf-idf to vectorize words, then embeddings, now transformers (souped-up embeddings). Each approach has its limits, and LLMs are no different. The results we see now are surprisingly good, but don’t overcome the baseline limitations in the underlying model.
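For readers who haven’t met tf-idf, here is a bare-bones sketch of the idea in plain Python. It uses the simplest unsmoothed variant (term frequency times `log(N / document frequency)`). Real libraries use slightly different formulas, and the `tf_idf` helper and the three toy documents are invented for illustration.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {term: weight} dict per document (unsmoothed tf-idf)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return vectors


docs = ["the cat sat", "the dog sat", "the cat ran"]
vectors = tf_idf(docs)
print(vectors[1])
```

A word that appears in every document (“the”) gets weight zero, while a word unique to one document (“dog”) gets the highest weight there. That shows the limit of the approach: the vector only reflects how often and how widely a word occurs, not what it means.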

The “Attention Is All You Need” paper that birthed modern AI came out in 2017. Before Transformers, “LLMs” were pretty much just Markov chains and statistical language models.
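To make “pretty much just Markov chains” concrete, here is a toy bigram model in Python. It counts which word follows which and then returns the most frequent follower, which is the crudest possible version of “predict what is most expected”. The corpus and the function names are made up for illustration, and nothing here resembles how a Transformer works internally.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it and how often."""
    words = text.split()
    transitions = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        transitions[current][following] += 1
    return transitions


def most_expected_next(transitions, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = transitions.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "line go up line go up line go down"
model = train_bigram(corpus)
print(most_expected_next(model, "go"))
```

The model can only ever return a word it has already seen following the input, and it has nothing to say about a word that never appeared in its training text.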

You’re right, I thought that paper came out in 2016.

“at some point” being like 400 years in the future? Sure.

Ok that’s probably a little bit of an exaggeration. 250 years.

It’ll replace brain dead CEOs before it replaces programmers.

I’m pretty sure I could write a bot right now that just regurgitates pop science bullshit and how it relates to Line Go Up business philosophy.

Edit: did it, thanks ChatJippity

```python
import sys


def main():
    # Check if the correct number of arguments are provided
    if len(sys.argv) != 2:
        print("Usage: python script.py <PopScienceBS>")
        sys.exit(1)

    # Get the input from the command line
    PopScienceBS = sys.argv[1]

    # Assign the input variable to the output variable
    LineGoUp = PopScienceBS

    # Print the output
    print(f"Line Go Up if we do: {LineGoUp}")


if __name__ == "__main__":
    main()
```

ChatJippity

I’ll start using that!

```
if lineGoUp {
    CollectUnearnedBonus()
} else {
    FireSomePeople()
    CollectUnearnedBonus()
}
```

I think we need to start a company and commence enshittification, pronto.

This company - employee owned, right?

I’m just going to need you to sign this Contributor License Agreement assigning me all your contributions and we’ll see about shares, maybe.

Yay! I finally made it, I’m calling my mom.

I love how even here there’s line metric coding going on

Says the person who is primarily paid with Amazon stock, wants to see that stock price rise for their own benefit, and won’t be in that job two years from now to be held accountable. Also, who has never written any kind of code. Yeah…. Ok. 🤮

Sure, Microsoft is happy to let their AIs scan everyone else’s code, but is anyone aware of any software houses letting AIs scan their in-house code?

Any lawyer worth their salt won’t let AIs anywhere near their company’s proprietary code until they are positive that AI isn’t going to be blabbing the code out to every one of their competitors.

But of course, IANAL.

The LLMs they train on their code will only be accessible internally. They won’t leak their own intellectual property.

Will that not be more expensive than having developers?

Yeah which is why this is a dumb statement from Amazon. But then again I don’t expect C-suite managers to really understand the intricacies of their own companies.

Of course not. It will be more expensive and they’ll still have to pay developers to figure out what’s wrong with their AI code.

Possibly. It’s hard to know without seeing the numbers and assessing output quality and volume.

Also it’s not unheard of that some bigwig wastes millions of company €€ for some project they fancy. (Billions if they happen to be Elon)

Depends on the use case. Training local LLMs is a lot cheaper after GaLore and there are ways to get useful local models with only a moderate amount of effort, see e.g. augmentoolkit.

This may or may not be practical in many use cases.

24 months is pretty generous but no doubt there will be significantly less demand for junior developers in the near future.

If only we had an overarching structure that everyone in society has agreed exists for the purposes of enforcing laws and regulating things. Something that governs people living in a region… Maybe then they could be compelled to show exactly what they’re using, and what those models are being trained with.

Oh well.

A company I used to work for outsourced most of their coding to a company in India. I say most because when the code came back the internal teams always had to put a bunch of work in to fix it and integrate it with existing systems. I imagine that, if anything, LLMs will just take the place of that overseas coding farm. The code they spit out will still need to be fixed and modified so it works with your existing systems, and that work is going to require programmers.

So instead of spending 1 day writing good code, we’ll be spending a week debugging shitty code. Great.

This will be used as an excuse to try to drive down wages while demanding more responsibilities from developers, even though this is absolute bullshit. However, if they actually follow through with their delusions and push to build platforms on AI-generated trash code, then soon after they’ll have to hire people to fix such messes.

All the manufacturers of mechanical keyboards just cried 🥺

24 months from now? Unlikely lol

I’m sure they’ll hold strong to that prediction in 24 mo. It’s just 24 more months away

We’ll have full self driving next year.

I remember a little over a decade ago while I was still in public school hearing about super advanced cars that had self driving were coming soon, yet we’re hardly anywhere closer to that goal (if you don’t count the Tesla vehicles running red lights incidents).

In Phoenix you can take a Waymo (self driving taxi) just like an Uber. They have tons of ads and they’re everywhere on the roads.

I am in Phoenix and just took one to the airport. First time riding in a Waymo. It was uncannily good and much more confident than the FSD Tesla I’ve ridden in a few times.

I haven’t taken one yet but have several friends who have. Besides being generally good, one of the best parts is unlike Uber, there’s no chance that you have a weird driver that wants to talk to you the whole ride

A subscription to Popular Science magazine through most of my teen years did wonders for my skepticism.

We should all be switched to hydrogen fuel by now, for our public transport lines with per person carriages that can split off from the main line seamlessly at speed to go off on side routes to your individual destination, that automatically rejoin the main line when you’re done with it. They were talking about all of that pre-2010.

I think I remember the hydrogen fuel thing.

Also, fuck Popular Science for making me think there was gonna be a zombie apocalypse due to some drug that turns you into a zombie.

This is the year of the Linux Desktop

15 years at least. probably more like 30. and it will be questionable, because it will use a lot of energy for every query and a lot of resources for cooling

it will use a lot of energy for every query and a lot of resources for cooling

Well, so do coders. Coffee can be quite taxing on the environment, as can air conditioning!

That’s probably the amount of time remaining before they move on to selling the next tech buzz word to some suckers.

And just like that, they’ll forget about these previous statements as well.

I fear Elon Musk’s broken promises method is being admired and copied.

I’m curious about what the “upskilling” is supposed to look like, and what’s meant by the statement that most execs won’t hire a developer without AI skills. Is the idea that everyone needs to know how to put ML models together and train them? Or is it just that everyone employable will need to be able to work with them? There’s a big difference.

I know how to purge one off of a system, does that count?


I guess the programmers should start learning how to mine coal…

We will all be given old school Casio calculators and sent to crunch numbers in the bitcoin mines.