If that’s true, how come there isn’t a single serious project written exclusively or mostly by an LLM? There isn’t a single library or remotely original application made with Claude or Gemini. Not one.

My last employer had many internal tools that were fine.

They had only a moderate amount of oversight.

I had to find a new job. I’m actually thinking of walking away from software development now that there are so few jobs :(

It sucks but there’s no sense pretending this won’t have a large impact on the job landscape.

What did these tools do? I don’t see any LLM being used for creating anything working from scratch, without the human prompter doing most of the heavy lifting.

Mostly internal data cleaning stuff, close etc, which I accept is less in scope than your original comment.

The things you are describing sound like if-statement levels of automation, GitHub Actions with preprogrammed responses rather than LLM whatever.

If you’re worrying about being replaced by that… Go find the code, read it, and feel better.

The code was non-trivial and relatively sophisticated. It performed statistical analysis on ingested data and the approach taken was statistically sound.

I was replaced by that. So was my colleague.

The job market is exceptionally tough right now and a large part of that is certainly LLMs.

I think taking people with statistical training out of the equation is quite dangerous, but it’s happening. In my area, everybody doing applied mathematics, statistics or analysis has been laid off.

In saying that, the produced program was quite good.

Certainly sounds more interesting than my original read of it! Sorry about that, I was grumpy.

All good man.

I think the point is that LLMs can replace people and they are quite good.

But they absolutely shouldn’t replace people, yet, or possibly ever.

But that’s what’s happening and it’s a massive problem because it’s leading to mediocre code in important spaces.

there isn’t a single serious project written exclusively or mostly by an LLM? There isn’t a single library or remotely original application

IMHO “original” here is the key. Finding yet another clone of a Web framework ported from one language to another in order to push online a basic CMS slightly faster, I can imagine this. In fact I even bet that LLMs, because they manipulate words in languages and because code can be safely (even though not cheaply) tested within containers, could be an interesting solution for that.

… but that is NOT really creating value for anyone, unless that person is technically very savvy and thus able to leverage why a framework in one language over another creates new opportunities (say safety, performance, etc). So… for somebody who is not that savvy, “just” relying on the numerous already existing open-source projects providing exactly the value they expect, there is no incentive to re-invent.

For anything that is genuinely original, i.e. something that is not a port to another architecture, a translation to another language, or a slight optimization, but rather something that needs just a bit of reasoning and evaluating against the value created, I’m very skeptical, and even more so when fewer resources are being poured in, EVEN with a radical drop in costs.

Let’s wait for any LLM to do a single successful MR on GitHub first before it starts a project on its own. I’m not aware of any.

Of course they won’t be; somebody has to debug all the crap AI writes.

If generative AI hasn’t replaced artists, it won’t replace programmers.

Generative AI is much better at art than coding.

Generative AI is much better at art than coding.

Mostly because humans invented this convenient thing called abstract art, and have since tolerated pretty much everything that looks “strange” as art. Must have been a deep learning advocate with a time machine who came up with abstract art.

Don’t need to be abstract art, it manages to make many kinds of art.

The difference between art and coding is that if you pick a slightly different color or make a line with slightly the wrong angle, it doesn’t change much. In code, however, slight mistakes usually result in bugs.

I meant that thanks to abstract art we’re willing to forgive “image glitches” in art by deep learning models.

I don’t think that’s the case though. Obvious glitches like 6-fingered hands can be avoided by generating a bunch of samples and picking the best one, and less obvious glitches tend to be overlooked, not considered a “feature” due to an appreciation of abstract art.

AI art works best for pieces that need to fade into the background, like stock images and whatnot to accompany more important copy. If it’s taking center stage, it needs a lot more hand-holding that probably makes it about as costly as just having a human create it.

It will never replace artists anyway.

Art isn’t just about what it looks like, it’s also about an emotional connection. Inherently we think that you cannot have an emotional connection with something created by a computer. Humans will always prefer art created by humans, even if objectively there isn’t a lot of difference.

Also, it seems to only do digital art.

The problem is that not everyone looks for that human-to-human emotional connection in art. For some, it’s just a part of a much bigger whole.

For example, if you’re an indie game dev with a small budget and no artistic skills, you may not be that scrupulous about getting an AI to generate some sprites or 3D models for you, if the alternative is to commission the art assets with money you don’t have.

Similar idea applies to companies building a website. Why pay for a licence to download some stock images or design assets if you can just get a GenAI to pump out hundreds for you that are very convincing (and probably even better) for a couple bucks?

Sure, but those jobs are often pretty low-paid, like on fiverr or something. But for anything with a broader impact, like AAA games, large corporations, or public art, you’ll commission a professional artist. AI works fine for low-budget projects and as a stand-in for works in progress, but it’s not replacing human artists anytime soon, though it may assist artists (e.g. in producing mockups and whatnot quickly).

Cloud tech seller sells cloud

He who knows, does not speak. He who speaks, does not know.

–Lao Tzu…

What does the person “who knows” do when they have to give a presentation?

Great answer to interview questions

Can I join anyone’s band of AI server farm raiders 24 months from now? Anyone forming a group? I will bring my meat bicycle.

Good luck debugging AI-generated code…

Really simple. Just ask it to point out the error. Also maybe tell it how the code is wrong. And then hope that the new code didn’t introduce new errors in formerly working sections. And that it understood what you meant. In a language that is inherently vague.

They think it will be easier than having people write the code from scratch. I don’t know shit about coding but I know that’s definitely not right.

AI is quite good at writing small sections of code. Usually because it’s more or less just copying something off the internet that it’s found, maybe changing a few bits around, but essentially just regurgitating something that’s in its data set. I could of course just have done that but it saves time since the AI can find the relevant piece of code to copy and modify more or less instantly.

But it falls apart if you ask it to build entire applications. You can barely even get it to write pong without a lot of tinkering around after the fact which rather defeats the point really.

It also doesn’t deal well if the thing you’re trying to program for is not very well documented, which would include things like drivers, which presumably is their bread and butter.

That might actually be a good test for managers who think coders can be replaced by this. Have them try to make a working version of Pong using AI prompts.

I left my job in fast food to go to school for tech because it seemed like the thing to do and I wanted to have a good life and be able to afford stuff. So I ruined my life getting a piece of paper only for them to enshittify things to oblivion and destroy the job market to the point it’s fast food or retail only again. I suppose getting a masters in something is the logical next step but at a certain point a scam’s a scam and I’m not digging a deeper hole.

There are already automated kiosks selling pizza here, and most fast food places already allow people to order using their phone or self-service kiosks.

Delivery is also quickly getting automated with small delivery robots that can likely be remote controlled if they get stuck.

While LLMs cannot reason, they can imitate, which can be combined with more traditional A.I. like utility A.I. that makes decisions based on a scoring system. I am guessing LLMs will just be used to make A.I. systems talk and execute actions while the actual “intelligence” will be handled through more traditional methods.
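For anyone who hasn’t seen utility AI, here is a minimal sketch of the split described above: a plain scoring system picks the action, and a text layer only phrases it. The action names, the scoring formulas, and the `describe` function (a stand-in for where an LLM call might go) are all made up for illustration, not taken from any real system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Action:
    """A candidate action plus a function that scores it for a given state."""
    name: str
    score: Callable[[Dict[str, float]], float]


def choose_action(actions: List[Action], state: Dict[str, float]) -> Action:
    # Utility AI in one line: evaluate every action and take the best score.
    return max(actions, key=lambda action: action.score(state))


def describe(action: Action) -> str:
    # Hypothetical stand-in for the language layer. A real system might call
    # an LLM here, but the decision has already been made by the scores.
    return f"I am going to {action.name}."


# Hypothetical actions for a delivery robot; the formulas are illustrative.
ACTIONS = [
    Action("recharge", lambda s: 1.0 - s["battery"]),
    Action("deliver pizza", lambda s: s["pending_orders"] * s["battery"]),
    Action("wait", lambda s: 0.1),
]

if __name__ == "__main__":
    state = {"battery": 0.9, "pending_orders": 1.0}
    print(describe(choose_action(ACTIONS, state)))
```

The point of the split is that the scoring functions can be inspected and tested like any other code, whatever ends up generating the sentence.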

Fast food and retail are fucked too tbh

Look, if you go back to fast food after getting a B.S. because some disconnected CEO said that programmers aren’t going to exist in a couple years, you’re not digging a hole, you’re jumping head first into it.

Sure, Microsoft is happy to let their AIs scan everyone else’s code, but is anyone aware of any software houses letting AIs scan their in-house code?

Any lawyer worth their salt won’t let AIs anywhere near their company’s proprietary code until they are positive that AI isn’t going to be blabbing the code out to every one of their competitors.

But of course, IANAL.

The LLMs they train on their code will only be accessible internally. They won’t leak their own intellectual property.

If only we had an overarching structure that everyone in society has agreed exists for the purposes of enforcing laws and regulating things. Something that governs people living in a region… Maybe then they could be compelled to show exactly what they’re using, and what those models are being trained with.

Oh well.

Will that not be more expensive than having developers?

Of course not. It will be more expensive and they’ll still have to pay developers to figure out what’s wrong with their AI code.

Yeah which is why this is a dumb statement from Amazon. But then again I don’t expect C-suite managers to really understand the intricacies of their own companies.

Possibly. It’s hard to know without seeing the numbers and assessing output quality and volume.

Also it’s not unheard of that some bigwig wastes millions of company €€ for some project they fancy. (Billions if they happen to be Elon)

Depends on the use case. Training local LLMs is a lot cheaper after GaLore and there are ways to get useful local models with only a moderate amount of effort, see e.g. augmentoolkit.

This may or may not be practical in many use cases.

24 months is pretty generous but no doubt there will be significantly less demand for junior developers in the near future.

Guys that are putting billions of dollars into their AI companies making grand claims about AI replacing everyone in two years. Whoda thunk it

All the manufacturers of mechanical keyboards just cried 🥺

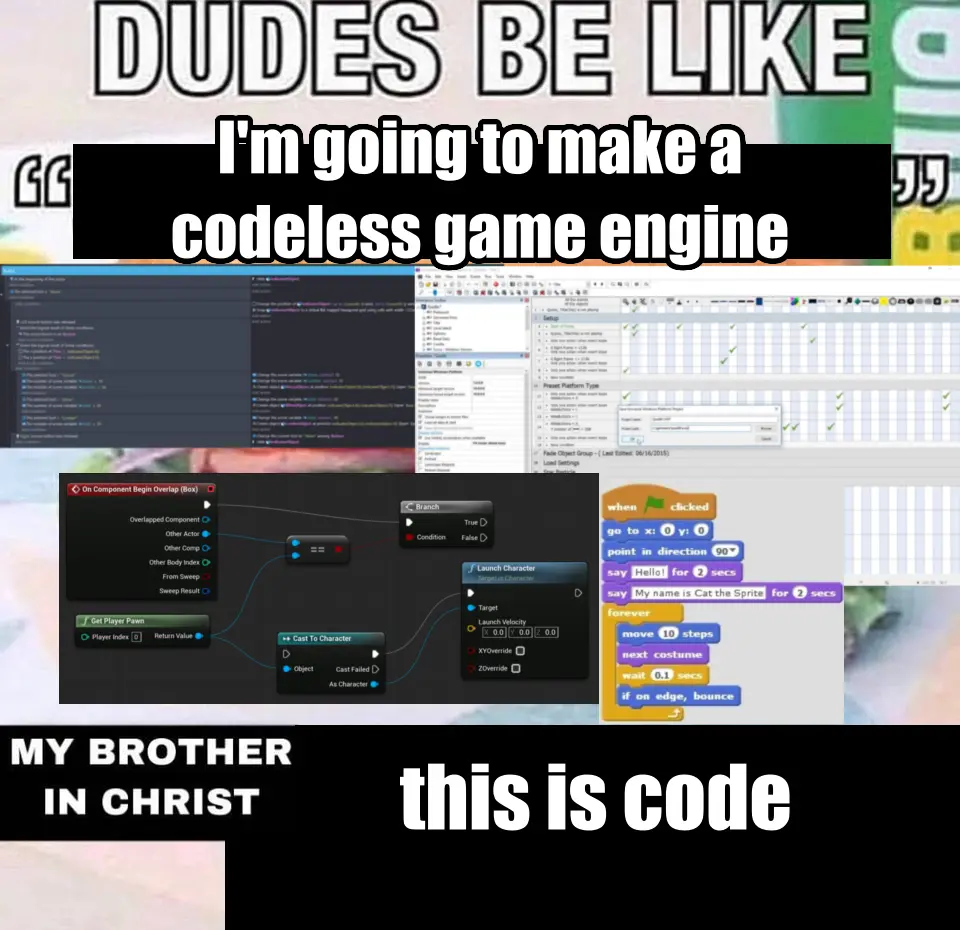

I made this meme a while back and I think it’s relevant

Looking at your examples, I have to object to putting Scratch in there.

My kids use it in clubs, and it’s great for getting algorithmic basics down before the keyboard proficiency is there for real coding.

It’s still code. What makes Scratch special is that it structurally rules out syntax errors while still looking quite like ordinary code. Node editors – I have a love and hate relationship with them. When you’re in e.g. Blender throwing together a shader it’s very very nice to have easy visualisation of literally everything, but then you know you want to compute `abs(a) + sin(b) + c^2` and yep that’s five nodes right there because apparently even the possibility to type in a formula is too confusing for artists. Never mind that Blender allows you to input formulas (without variables though) into any field that accepts a number.

That’s just how the code is rendered. There’s still all the usual constructs.

The only people who would say this are people that don’t know programming.

LLMs are not going to replace software devs.

Wrong, this is also exactly what people selling LLMs to people who can’t code would say.

It’s this. When boards and non-tech savvy managers start making decisions based on a slick slide deck and a few visuals, enough will bite that people will be laid off. It’s already happening.

There may be a reckoning after, but Wall Street likes it when you cut too deep and then bounce back to the “right” (lower) headcount. Even if you’ve broken the company and they just don’t see the glide path.

It’s gonna happen. I hope it’s rare. I’d argue it’s already happening, but I doubt enough people see it underpinning recent lay offs (yet).

That’s not what was said. He specifically said coding.

I don’t know if you noticed but most of the people making decisions in the industry aren’t programmers, they’re MBAs.

Irrelevant, anyone who tries to replace their devs with LLMs will crash and burn. The lessons will be learned. But yes, many executives will make stupid ass decisions around this tech.

It’s really sad how even techheads ignore how far LLM coding has come in the last 3 years and what that means in the long run.

Just look how rapidly voice recognition developed once Google started exploiting all of its users’ voice-to-text data. There was a point when industry experts stated ‘There will never be a general voice recognition system that is 90%+ across all languages and dialects.’ And Google made one within 4 years.

The natural bounty of a no-salary programmer in a box is too great for this to ever stop being developed, and the people with the money only want more money, and not paying devs is something they’ve wanted since the coding industry literally started.

Yes, it’s terrible now, but it is also in its infancy. Like voice recognition in the late 90s, it is a novelty with many hiccoughs. That won’t be the case for long, and anyone who confidently thinks it can’t ever happen will be left without recourse when it does.

But that’s not even the worst part of all this. I’m not going into black-box code, because all of you just argue stupid points when I do. But just so you know, human programming will be a thing of the past, outside of hobbyists and ultra-secure systems, within 20 years.

Maybe sooner

Maybe in 20 years. Maybe. But this article is quoting CEOs saying 2 years, which is bullshit.

I think it’s just as likely that in 20 years they’ll be crying because they scared enough people away from the career that there aren’t enough developers, when the magic GenAI that can write all code still doesn’t exist.

yeah 2 years is bullshit but with innovation, 10 years is still reasonable and fucking terrifying.

AI as a general concept probably will at some point. But LLMs have all but reached the end of the line and they’re not nearly smart enough.

“at some point” being like 400 years in the future? Sure.

Ok that’s probably a little bit of an exaggeration. 250 years.

LLMs have already reached the end of the line 🤔

I don’t believe that. At least from an implementation perspective we’re extremely early on, and I don’t see why the tech itself can’t be improved either.

Maybe its current iteration has hit a wall, but I don’t think anyone can really say what the future holds for it.

LLMs have been around since roughly ~~2016~~ 2017 (comment below corrected me that the Attention paper was 2017). While scaling them up has improved their performance/capabilities, there are fundamental limitations on the actual approach. Behind the scenes, LLMs (even multimodal ones like GPT-4) are trying to predict what is most expected. While that can be powerful, it means they can never innovate or be truth systems.

For years we used things like tf-idf to vectorize words, then embeddings, now transformers (souped-up embeddings). Each approach has its limits, and LLMs are no different. The results we see now are surprisingly good, but don’t overcome the baseline limitations in the underlying model.
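For readers who never used tf-idf, a rough sketch of what that older style of vectorizing looks like. It assumes whitespace tokenization, non-empty documents, and the plain `log(N / df)` form of the inverse document frequency; real libraries use smoothed variants, and the function name and sample sentences here are only illustrative.

```python
import math
from collections import Counter
from typing import List, Tuple


def tf_idf(docs: List[str]) -> Tuple[List[str], List[List[float]]]:
    """Return the vocabulary and one tf-idf vector per document."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)

    # Document frequency: in how many documents does each term appear?
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vocab = sorted(df)

    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append([
            # Term frequency times inverse document frequency.
            (counts[term] / len(tokens)) * math.log(n_docs / df[term])
            for term in vocab
        ])
    return vocab, vectors


docs = ["the cat sat", "the dog sat", "the cat ran"]
vocab, vectors = tf_idf(docs)

if __name__ == "__main__":
    for doc, vector in zip(docs, vectors):
        print(doc, [round(weight, 3) for weight in vector])
```

A word that appears in every document, like "the" here, gets a weight of zero, and nothing in the vector reflects word order or meaning. That is the kind of baseline limit each later approach was trying to get past.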

The “Attention Is All You Need” paper that birthed modern AI came out in 2017. Before Transformers, “LLMs” were pretty much just Markov chains and statistical language models.

You’re right, I thought that paper came out in 2016.

we’re extremely early on

Oh really! The analysis has been established since the 80’s. It’s so far from early on that that statement is comical.

Transformers, the foundation of modern “AI”, was proposed in 2017. Whatever we called “AI” and “Machine Learning” before that was mostly convolutional networks inspired by the 80’s “Neocognitron”, which is nowhere near as impressive.

The most advanced thing a Convolutional network ever accomplished was DeepDream, and visual Generative AI has skyrocketed in the 10 years since then. Anyone looking at this situation who believes that we have hit bedrock is delusional.

From DeepDream to Midjourney in 10 years is incredible. The next 10 years are going to be very weird.

I’m not trained in formal computer science, so I’m unable to evaluate the quality of this paper’s argument, but there’s a preprint out that claims to prove that current computing architectures will never be able to advance to AGI, and that rather than accelerating, improvements are only going to slow down due to the exponential increase in resources necessary for any incremental advancements (because it’s an NP-hard problem). That doesn’t prove LLMs are end of the line, but it does suggest that additional improvements are likely to be marginal.

I can see the statement in the same way word processing displaced secretaries.

There used to be two tiers in business. Those who wrote ideas/solutions and those who typed out those ideas into documents to be photocopied and faxed. Now the people who work on problems type their own words and email/slack/teams the information.

In the same way there are programmers who design and solve the problems, and then the coders who take those outlines and make it actually compile.

LLM will disrupt the programmers leaving the problem solvers.

There are still secretaries today. But there aren’t vast secretary pools in every business like 50 years ago.

I thought by this point everyone would know how computers work.

That, uh, did not happen.

It’ll have to improve by an order of magnitude for that effect. Right now it’s basically an improved Stack Overflow.

…and only sometimes improved. And it’ll stop improving if people stop using Stack Overflow, since that’s one of the main places it’s mined for data.

Nah, it’s built into the editors and repos these days.

?

If no one uses Stack Overflow anymore, then no one posts new answers. So AI has no new info to mine.

They are mining the IDE and GitHub.

You seem to be missing what I’m saying, and missing my point. But I’m not going to try to rephrase it again.

The problem with this take is the assertion that LLMs are going to take the place of secretaries in your analogy. The reality is that replacing junior devs with LLMs is like replacing secretaries with a network of typewriter monkeys who throw sheets of paper at a drunk MBA who decides what gets faxed.

I’m saying that devs will use LLMs in the same way they currently use word processing to send emails instead of handing handwritten notes to a secretary to format, grammar/spell check, and type.

There is no reason to believe that LLMs will disrupt anyone any time soon. As it stands now the level of workmanship is absolutely terrible and there are more things to be done than anyone has enough labor to do. Making it so skilled professionals can do more literally just makes it so more companies can produce work of a quality that is not complete garbage.

Juniors produce progressively more directly usable work with reason and autonomy, and are the only way you develop seniors. As it stands, LLMs do nothing with autonomy and do much of the work they do wrong. Even with improvements they will not, in the near term, actually be a coworker. They remain something that you, a skilled person, actually use like a wrench. In the hands of someone who knows nothing they are worth nothing. Thinking this will replace a segment of workers of any stripe is just wrong.

I wrote a comment about this several months ago on my old kbin.social account. That site is gone and I can’t seem to get a link to it, so I’m just going to repost it here since I feel it’s relevant. My kbin client doesn’t let me copy text posts directly, so I’ve had to use the Select feature of the Android app switcher. Unfortunately, the comment didn’t emerge unscathed, and I lack the mental energy to fix it due to covid brain fog (EDIT: it appears that many uses of `I` were not preserved). The context of the old post was about layoffs, and it can be found here: https://kbin.earth/m/[email protected]/t/12147

I want to offer my perspective on the AI thing from the point of view of a senior individual contributor at a larger company. Management loves the idea, but there will be a lot of developers fixing auto-generated code full of bad practices and mysterious bugs at any company that tries to lean on it instead of good devs. A large language model has no concept of good or bad, and it has no logic. It will happily generate string-templated SQL queries that are ripe for SQL injection. I’ve had to fix this myself. Things get even worse when you have to deal with a shit language like Bash that is absolutely full of God awful footguns. Sometimes you have to use that wretched piece of trash language, and the scripts generated are horrific. Remember that time when Steam on Linux was effectively running rm -rf /* on people’s systems? I’ve had to fix that same type of issue multiple times at my workplace.
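To make the string-templated SQL complaint concrete, here is a small sketch using Python’s built-in `sqlite3` module. The `users` table, the function names, and the payload are made up for illustration; this is not the code from the workplace described above.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    # Hypothetical in-memory table, just enough to show the difference.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # String templating: the input becomes part of the SQL text itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Placeholder: the value is passed separately and is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_demo_db()
payload = "nobody' OR '1'='1"
unsafe_rows = find_user_unsafe(conn, payload)
safe_rows = find_user_safe(conn, payload)

if __name__ == "__main__":
    print("templated query returned:", unsafe_rows)
    print("parameterized query returned:", safe_rows)
```

With the templated version the payload rewrites the `WHERE` clause and every row comes back. With the placeholder version the same input is treated as an odd-looking name and matches nothing.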

I think LLMs will genuinely transform parts of the software industry, but I absolutely do not think they’re going to stand in for competent developers in the near future. Maybe they can help junior developers who don’t have a good grasp on syntax and patterns and such. I’ve personally felt no need to use them, since I spend about 95% of my time on architecture, testing, and documentation.

Now, do the higher-ups think the way that I do? Absolutely not. I’ve had senior management ask me about how I’m using AI tooling, and they always seem so disappointed when I explain why I personally don’t feel the need for it and what I feel its weaknesses are. Bossman sees it as a way to magically multiply IC efficiency for nothing, so I absolutely agree that it’s likely playing a part in at least some of these layoffs.

Basically, I think LLMs can be helpful for some folks, but my experience is that the use of LLMs by junior developers absolutely increases the workload of senior developers. Senior developers using LLMs can experience a productivity bump, but only if they’re very critical of the output generated by the model. I am personally much faster just relying on traditional IDE autocomplete, since I don’t have to change from “I’m writing code” mode to “I’m reviewing code” mode.

Will there even be a path for junior level developers?

The same one they have now, perhaps with a steeper learning curve. The market for software developers is already saturated with disillusioned junior devs who attended a boot camp with promises of 6 figure salaries. Some of them did really well, but many others ran headlong into the fact that it takes a lot more passion than a boot camp to stand out as a junior dev.

From what I understand, it’s rough out there for junior devs in certain sectors.

The one colleague using AI at my company produced (CUDA) code with lots of memory leaks that required two expert developers to fix. LLMs produce code based on vibes instead of following language syntax and proper coding practices. Maybe that would be ok in a more forgiving high level language, but I don’t trust them at all for low level languages.

I was trying to use it to write a program in Python for this macropad I bought and I have yet to get anything usable out of it. It got me closer than I would have been by myself, and I don’t have a ton of coding experience so its problems are probably partially on me, but everything it’s given me has required me to correct it to work.

No

Good take

The one thing that LLMs have done for me is to make summarizing and correlating data in documents really easy. Take 20 docs of notes about a project and have it summarize where they are at so I can get up to speed quickly. Works surprisingly well. I haven’t had luck with code requests.

Yeah, that’s not going to happen.

Yeah, writing the code isn’t really the hard part. It’s knowing what code to write and how to structure it to work with your existing code or potential future code. Knowing where things might break so you can add the correct tests or alerts. Giving time estimates on how long it will take to build the parts of the system and building in phases to meet your team’s needs.

This. I’m learning a new skill right now & hardly any of it is actual writing— it’s how to arrange the pieces someone else wrote (& which sometimes AI can decently reproduce.)

When you use a computer you don’t start by mining iron, because the thing is already built

I’ve always thought that design and maintenance are the difficult and gruelling parts, and writing code is when you get to relax for a bit. Most of the time you’re in maintenance mode, and it’s harder than writing new code.

It’ll replace brain dead CEOs before it replaces programmers.

I’m pretty sure I could write a bot right now that just regurgitates pop science bullshit and how it relates to Line Go Up business philosophy.

Edit: did it, thanks ChatJippity

    import sys

    def main():
        # Check if the correct number of arguments are provided
        if len(sys.argv) != 2:
            print("Usage: python script.py <PopScienceBS>")
            sys.exit(1)

        # Get the input from the command line
        PopScienceBS = sys.argv[1]

        # Assign the input variable to the output variable
        LineGoUp = PopScienceBS

        # Print the output
        print(f"Line Go Up if we do: {LineGoUp}")

    if __name__ == "__main__":
        main()

ChatJippity

I’ll start using that!

    if lineGoUp {
        CollectUnearnedBonus()
    } else {
        FireSomePeople()
        CollectUnearnedBonus()
    }

I love how even here there’s line metric coding going on

I think we need to start a company and commence enshittification, pronto.

This company - employee owned, right?

I’m just going to need you to sign this Contributor License Agreement assigning me all your contributions and we’ll see about shares, maybe.

Yay! I finally made it, I’m calling my mom.