- cross-posted to:

- [email protected]

This just shows how speculative the whole AI obsession has been. Wildly unstable and subject to huge shifts since its value isn’t based on anything solid.

It’s based on guessing what the actual worth of AI is going to be, so yeah, wildly speculative at this point because breakthroughs seem to be happening fairly quickly, and everyone is still figuring out what they can use it for.

There are many clear use cases that are solid, so AI is here to stay, that’s for certain. But how far it can go, and what it will require, is what the market is gambling on.

If a new model suddenly appears that delivers similar results on a fraction of the hardware, it’s going to chop that value down by a lot.

If someone finds another use case, for example a model with new capabilities, boom, value goes up.

It’s a rollercoaster…

> There are many clear use cases that are solid, so AI is here to stay, that’s for certain. But how far it can go, and what it will require, is what the market is gambling on.

I would disagree on that. There are a few niche uses, but OpenAI can’t even make a profit charging $200/month.

The uses seem pretty minimal as far as I’ve seen. Sure, AI has a lot of applications in terms of data processing, but the big generic LLMs propping up companies like OpenAI? Those seem to have no utility beyond slop generation.

Ultimately the market value of any work produced by a generic LLM is going to be zero.

It’s difficult to take your comment seriously when it’s clear that everything you’re saying seems to be based on ideological reasons rather than real ones.

Besides that, a lot of the value is derived from the market trying to figure out whether, and which, company will develop AGI. Whatever company manages to achieve it will easily become the most valuable company in the world, so people FOMO into any AI company that seems promising.

> Besides that, a lot of the value is derived from the market trying to figure out whether, and which, company will develop AGI. Whatever company manages to achieve it will easily become the most valuable company in the world, so people FOMO into any AI company that seems promising.

There is zero reason to think the current slop-generating technoparrots will ever lead to AGI. That premise is entirely made up to fuel the current “AI” bubble.

The market doesn’t care what either of us thinks; investors will do what investors do: speculate.

They may well lead to the thing that leads to the thing that leads to the thing that leads to AGI though. Where there’s a will

Sure, but that can be said of literally anything. It would be interesting if LLMs were at least new, but they have been around forever; we just now have better hardware to run them.

That’s not even true. LLMs in their modern iteration are significantly enabled by transformers, something that was only proposed in 2017.

The conceptual foundations of LLMs stretch back to the 50s, but neither the physical hardware nor the software architecture were there until more recently.

Language learning, code generation, brainstorming, summarizing: AI has a lot of uses. You’re just either not paying attention or are biased against it.

It’s not perfect, but it’s also a very new technology that’s constantly improving.

I decided to close the post now. There is room for any opinion, but I can see people writing things which are completely false however you look at them: you can dislike Sam Altman (I do), you can worry about China’s interest in entering the competition now and like it (I do), but comments about LLMs being useless while millions of people use them daily for multiple purposes sound just like lobbying.

The economy rests on a fucking chatbot. This future sucks.

That’s the thing: if the cost of AI goes down, and AI is a valuable input to businesses, that should be a good thing for the economy. To be sure, not for the tech sector that sells these models, but for all of the companies buying these services it should be great.

Surely workers will reap a big chunk of that value, right?

Only thanks to the PRC

Right?.jpg

On the bright side, the clear fragility and lack of direct connection to real productive forces shows the instability of the present system.

And no matter how many protectionist measures the US implements, we’re seeing that they’re losing the global competition. I guess protectionism and oligarchy aren’t the best ways to accomplish the stated goals of a capitalist economy. How soon before China is leading in every industry?

This conclusion was foregone when China began to focus on developing the Productive Forces and the US took that for granted. Without a hard pivot, the US can’t even hope to catch up to the productive trajectory of China, and even if it does pivot hard, that doesn’t mean it has a chance in the first place.

In fact, protectionism has frequently backfired, and had other nations seeking inclusion into BRICS or more favorable relations with BRICS nations.

Economy =/= stock market

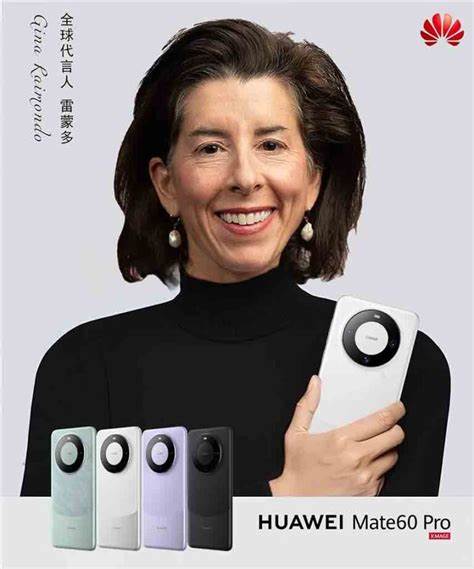

Nvidia’s most advanced chips, H100s, have been banned from export to China since September 2022 by US sanctions. Nvidia then developed the less powerful H800 chips for the Chinese market, although they were also banned from export to China last October.

I love how in the US they talk about meritocracy, competition being good, blablabla… but they rig the game from the beginning. And even so, people find a way to be better. Fascinating.

You’re watching an empire in decline. Its words stopped matching its actions decades ago.

The “1 trillion” never existed in the first place. It was all hype by a bunch of Tech-Bros, huffing each other’s farts.

Emergence of DeepSeek raises doubts about sustainability of western artificial intelligence boom

Is the “emergence of DeepSeek” really what raised doubts? Are we really sure there haven’t been lots of doubts raised previous to this? Doubts raised by intelligent people who know what they’re talking about?

Ah, but those “intelligent” people cannot be very intelligent if they are not billionaires. After all, the AI companies know exactly how to assess intelligence:

Microsoft and OpenAI have a very specific, internal definition of artificial general intelligence (AGI) based on the startup’s profits, according to a new report from The Information. … The two companies reportedly signed an agreement last year stating OpenAI has only achieved AGI when it develops AI systems that can generate at least $100 billion in profits. That’s far from the rigorous technical and philosophical definition of AGI many expect. (Source)

So if the Chinese version is so efficient, and is open source, then couldn’t OpenAI and Anthropic run the same on their huge hardware and get enormous capacity out of it?

OpenAI could use less hardware to get similar performance if they used the Chinese version, but they already have enough hardware to run their model.

Theoretically the best move for them would be to train their own, larger model using the same technique (so as to still fully utilize their hardware), but this is easier said than done.

Just ask the AI to assimilate the model?

Not necessarily… if I gave you my “faster car” to run on your private 7-lane highway, you could definitely squeeze every last bit of speed out of the car, but no more.

DeepSeek works as intended on 1% of the hardware the others allegedly “require” (allegedly; remember this is all a super hype bubble)… if you run it on super powerful machines, it will perform better, but only to a certain extent… it will not suddenly develop more or better qualities just because the hardware it runs on is better.

This makes sense, but it would still allow a hundred times more people to use the model without running into limits, no?
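The car analogy and this reply describe two different effects: faster hardware does not make a single copy of a model smarter, but a model that needs less hardware lets the same pool host more copies at once. A minimal sketch of that arithmetic, with hypothetical GPU counts (none of these figures come from DeepSeek or OpenAI), assuming each copy serves roughly the same number of users:

```python
def replicas_that_fit(total_gpus: int, gpus_per_replica: int) -> int:
    """Number of independent model copies a fixed GPU pool can host at once."""
    if gpus_per_replica <= 0:
        raise ValueError("gpus_per_replica must be positive")
    return total_gpus // gpus_per_replica


def capacity_gain(total_gpus: int, old_gpus_per_replica: int, new_gpus_per_replica: int) -> float:
    """How many times more copies (and so, roughly, users) the same pool can serve.

    This only scales throughput; each copy still gives the same quality of answers.
    """
    old = replicas_that_fit(total_gpus, old_gpus_per_replica)
    new = replicas_that_fit(total_gpus, new_gpus_per_replica)
    return new / old


# Hypothetical pool of 10,000 GPUs: a model needing 100 GPUs per copy
# versus one needing 1 GPU per copy (i.e. 1% of the hardware).
print(replicas_that_fit(10_000, 100))  # 100
print(replicas_that_fit(10_000, 1))    # 10000
print(capacity_gain(10_000, 100, 1))   # 100.0
```

Under these assumptions the saving shows up as capacity (a hundred times more concurrent copies), not as better output from any one copy, which is the distinction both comments are drawing.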

hence certain tech grifters going “oh shitt…”

Didn’t DeepSeek solve some of the data-wall problems by creating good chain-of-thought data with an intermediate RL model? That approach should work with the tried-and-tested scaling laws, just using much more compute.

It’s not multimodal so I’d have to imagine it wouldn’t be worth pursuing in that regard.

Doesn’t DeepSeek work on that, though, with their Janus models?

Tech bros learn about diminishing returns challenge (impossible)

I am extremely ignorant of all this AI stuff. So can somebody please “Explain Like I’m 5” why this new thing can wipe over a trillion dollars off US stocks? I would appreciate the help a lot.

"You see, dear grandchildren, your grandfather used to have an apple orchard. The fruits were so sweet and nutritious that every town citizen wanted a taste, because they thought it was the only possible orchard in the world. Therefore, the citizens gave a lot of money to your grandfather, because they thought the orchard would give them more apples in return, more than the worth of the money they gave. Little did they know the world was vastly larger than our ever more arid US wasteland. Suddenly an oriental orchard was discovered which was surprisingly cheaper to plant and maintain, and produced more apples. This meant a significant potential loss of money for the inhabitants of the town called Idiocracy. Therefore, many people asked for their money back by selling their imaginary not-yet-grown apples to people who think the orchard will still be worth more in the future.

This is called investing, or to those who are honest with themselves: participating in a multi-level marketing pyramid scheme. You see, children, it can make a lot of money, but it destroys the soul and our habitat at the same time, which goes unnoticed by all these people with advanced degrees. So think again when you hear someone speak with fancy words and untamed confidence. Many a time their reasoning falls below the threshold of dog poop. But that’s a story for another time. Sweet dreams."

Fantastic, thanks.

The best description of reddit’s WallstreetBets sub I’ve ever seen.

I shall pin this comment to the top of my curriculum vitae.

Basically, US companies involved in AI have been grossly overvalued for the last few years due to having a sudo monopoly over AI tech (companies like OpenAI, which makes ChatGPT, and Nvidia, which makes the graphics cards used to run AI models).

DeepSeek (a Chinese company) just released a free, open-source version of ChatGPT that cost a fraction of the price to train (set up), which has caused US stock valuations to drop as investors realise the US isn’t the only global player and isn’t nearly as far ahead as previously thought.

Nvidia is losing value as it was previously believed that top of the line graphics cards were required for ai, but turns out they are not. Nvidia have geared their company strongly towards providing for ai in recent times.

*pseudo

Sudo is a Linux command-line tool.

Thanks.

woohoo for Nvidia losing, fuck those cunts

I mean, Nvidia isn’t really out. They went from making a relatively niche tech product to the world’s most in-demand tech product by being in the right place at the right time with AI and crypto. At worst they will be back where they started.

Let’s say I make a thing. Let’s say somebody offers to buy it from me for $10. I sell it to them, and then let’s say somebody else makes a better thing, and now no one will pay more than $2 for my thing. If my thing is a publicly traded corporation, then that just “wiped off” $8 from the stock market. The person I sold it to “lost” $8. Corporations that make AI and the hardware to run it just “lost” a lot of value.
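The same arithmetic scales up to a whole company: its market value is the share count times the last traded price, so a price drop "wipes off" value on paper for everyone holding shares at once. A minimal sketch with hypothetical share counts (not any real company’s figures):

```python
def market_cap(shares_outstanding: float, price_per_share: float) -> float:
    """Market value of a company: every share priced at the last trade."""
    return shares_outstanding * price_per_share


def value_wiped_off(shares_outstanding: float, old_price: float, new_price: float) -> float:
    """Drop in market value when the price falls.

    This is an unrealised, on-paper loss for current holders; no cash
    changes hands just because the price moved.
    """
    return market_cap(shares_outstanding, old_price) - market_cap(shares_outstanding, new_price)


# The single "thing" from the comment above: one unit, $10 down to $2.
print(value_wiped_off(1, 10, 2))  # 8

# Hypothetical company with 1,000,000 shares and the same price move.
print(value_wiped_off(1_000_000, 10, 2))  # 8000000
```

Headlines about a trillion dollars being wiped off add up this kind of on-paper drop across all affected companies.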

Makes sense, thanks.

No surprise. American companies are chasing fantasies of general intelligence rather than optimizing for today’s reality.

That, and they are just brute-forcing the problem. Neural nets have been around forever, but it’s only in the last 5 or so years that they could do anything. There’s been little to no real breakthrough innovation, as they just keep throwing more processing power at it with more inputs, more layers, more nodes, more links, more CUDA.

And their pursuit of general AI is just the short-sighted desire to replace workers with something they don’t have to pay and that won’t argue about its rights.

Also all of these technologies forever and inescapably must rely on a foundation of trust with users and people who are sources of quality training data, “trust” being something US tech companies seem hell bent on lighting on fire and pissing off the yachts of their CEOs.

Looks like it is not any smarter than the other junk on the market. The confusion that people consider AI as “intelligence” may be rooted in their own deficits in that area.

And now people exchange one American Junk-spitting Spyware for a Chinese junk-spitting spyware. Hurray! Progress!

It is open source, so it should be audited, and if there are back doors, they can be plugged in a fork.

> Looks like it is not any smarter than the other junk on the market. The confusion that people consider AI as “intelligence” may be rooted in their own deficits in that area.

Yep, because they believed that OpenAI’s (two lies in a name) models would magically digivolve into something that goes well beyond what it was designed to be. Trust us, you just have to feed it more data!

> And now people exchange one American Junk-spitting Spyware for a Chinese junk-spitting spyware. Hurray! Progress!

That’s the neat bit, really. With that model being free to download and run locally it’s actually potentially disruptive to OpenAI’s business model. They don’t need to do anything malicious to hurt the US’ economy.

It is progress in a sense. The West really put the spotlight on their shiny new expensive toy and banned the export of toy-maker parts to rival countries.

One of those countries made a cheap toy out of janky, unwanted parts for much less money, and it’s on par with or better than the West’s.

As for why we’re having an arms race based on AI, I genuinely don’t know. It feels like a race to the bottom, with the fallout being the death of the internet (for better or worse).

The difference is that you can actually download this model and run it on your own hardware (if you have sufficient hardware). In that case it won’t be sending any data to China. These models are still useful tools. As long as you’re not interested in particular parts of Chinese history of course ;p

With understanding LLM, I started to understand some people and their “reasoning” better. That’s how they work.

That’s a silver lining, at least.

I’m tired of this uninformed take.

LLMs are not a magical box you can ask anything of and get answers. If you are lucky and ask questions blindly, it can give some accurate general data, but just as with human brains, you aren’t going to be able to accurately recreate random trivia verbatim from a neural net.

What LLMs are useful for, and how they should be used, is as a non-deterministic parsing context tool. When people talk about feeding it more data, they think of how these things are trained. But you also need to give it grounding context outside of what the prompt is. Give it a PDF manual, website link, documentation, whatever, and it will use that as context for what you ask it. You can even set it to link to its references.
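A minimal sketch of supplying grounding context, assuming a chat-style API that takes a list of role/content messages (the shape used by, for example, Ollama’s `/api/chat` endpoint). The function name, the instruction wording, and the router manual excerpt are hypothetical illustrations; the point is only that the reference material travels with the question and is numbered so the answer can cite it:

```python
from typing import Dict, List


def build_grounded_messages(question: str, sources: List[str]) -> List[Dict[str, str]]:
    """Wrap a question with reference material the model should answer from.

    Each source is numbered so the model can point back to it as [1], [2], ...
    """
    numbered = "\n\n".join(
        f"[{i}] {text.strip()}" for i, text in enumerate(sources, start=1)
    )
    system_prompt = (
        "Answer using only the reference material below. "
        "Cite the sources you used as [n]. "
        "If the answer is not in the material, say that you don't know.\n\n"
        + numbered
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]


# Hypothetical excerpt from a device manual, used as grounding context.
manual_excerpt = "To reset the router, hold the rear button for 10 seconds."
messages = build_grounded_messages("How do I reset the router?", [manual_excerpt])
for m in messages:
    print(m["role"], "->", m["content"][:60])
```

The numbered citations do not make the output trustworthy on their own; they make it easier to do the validation the next comment insists on, by checking each claim against the source it names.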

You still have to know enough to be able to validate the information it is giving you, but that’s the case with any tool. You need to know how to use it.

As for the spyware part, that only matters if you are using the hosted instances they provide. Even for OpenAI stuff you can run the models locally with open-source software and maintain control over all the data you feed it. As far as I have found, none of the models you run with Ollama or other local AI software have been caught pushing data to a remote server, at least using open-source software.

artificial intelligence

AI has been used in game development for a while, and I haven’t seen anyone complain about the name before it became synonymous with image/text generation.

Well, that is where the problems started.

It was a misnomer there too, but at least people didn’t think a bot playing C&C would be able to save the world by evolving into a real, greater than human intelligence.

> And now people exchange one American Junk-spitting Spyware for a Chinese junk-spitting spyware.

LLMs aren’t spyware; they’re graphs that organize large bodies of data for quick and user-friendly retrieval. The Wikipedia schema accomplishes a similar, albeit more primitive, role. There’s nothing wrong with the fundamentals of the technology, just the applications that Westoids doggedly insist it be used for.

If you no longer need to boil down half a Great Lake to create the next iteration of Shrimp Jesus, that’s good whether or not you think Meta should be dedicating millions of hours of compute to this mind-eroding activity.

I think maybe it’s naive to think that if the cost goes down, Shrimp Jesus won’t just be in higher demand. Shrimp Jesus has no market cap; bullshit has no market cap. If you make it more efficient to flood cyberspace with bullshit, cyberspace will just be flooded with more bullshit. Those Great Lakes will still boil, don’t worry.

> I think maybe it’s naive to think that if the cost goes down, Shrimp Jesus won’t just be in higher demand.

Not that demand will go down but that economic cost of generating this nonsense will go down. The number of people shipping this back and forth to each other isn’t going to meaningfully change, because Facebook has saturated the social media market.

> If you make it more efficient to flood cyberspace with bullshit, cyberspace will just be flooded with more bullshit.

The efficiency is in the real cost of running the model, not in how it is applied. The real bottleneck for AI right now is human adoption. Guys like Altman keep insisting a new iteration (that requires a few hundred miles of nuclear power plants to power) will finally get us a model that people want to use. And speculators in the financial sector seemed willing to cut him a check to go through with it.

Knocking down the real physical cost of this boondoggle is going to de-monopolize this awful idea, which means Altman won’t have a trillion dollar line of credit to fuck around with exclusively. We’ll still do it, but Wall Street won’t have Sam leading them around by the nose when they can get the same thing for 1/100th of the price.

> There’s nothing wrong with the fundamentals of the technology, just the applications that Westoids doggedly insist it be used for.

Westoids? Are you the type of guy I feel like I need to take a shower after talking to?

Almost like yet again the tech industry is run by lemming CEOs chasing the latest moss to eat.

The best part is that it’s open source and available for download.

But Chinese…

They’d need to do some pretty fucking advanced hackery to be able to do surveillance on you just via the model. Everything’s possible I guess, but … yeah perhaps not.

If they could do that, essentially nothing you do on your computer would be safe.

I asked it about Tiananmen Square, it told me it can’t answer that because it can only respond with “harmless” responses.

Yes, the online model has those filters. Someone tried it with one of the downloaded models and it answers just fine.

This was a local instance.

Does the same thing on my local instance.

When running locally, it works just fine without filters.

I tried the smaller models and it’s not fine. It’s hard coded.

You misspelled “lies”. Or were you trying to type “psyops tool”??

That’s kind of normal, it was made in China after all and the developers didn’t want to end up in jail I bet.

That said, China is of course a crappy dictatorship.

So can I have a private version of it that doesn’t tell everyone about me and my questions?

Check out Ollama: https://ollama.com/library/deepseek-r1

Thank you very much. I did ask ChatGPT some technical questions about some… subjects… but having something that is private AND can give me all the information I want/need is a godsend.

Goodbye, ChatGPT! I barely used you, but that is a good thing.

Yep, look up Ollama.

Yeah, but you have to run a different model if you want accurate info about China.

Yeah but China isn’t my main concern right now. I got plenty of questions to ask and knowledge to seek and I would rather not be broadcasting that stuff to a bunch of busybody jackasses.

I agree. I don’t know enough about all the different models, but surely there’s a model that’s not going to tell you “<whoever’s> government is so awesome” when asking about rainfall or some shit.

Unfortunately it’s trained on the same US propaganda filled english data as any other LLM and spits those same talking points. The censors are easy to bypass too.

Yes

Can someone with the knowledge please answer this question?

I watched one video and read two pages of text, so take this with a mountain of salt. From that, I gathered that DeepSeek R1 is the model you interact with when you use the app. The complexity of a model is expressed as its number of parameters (though I don’t know yet what those are), which dictates its hardware requirements. R1 contains about 670 billion parameters and requires very, very beefy server hardware; one video said it would take tens of GPUs. And it seems you want a lot of VRAM on your GPU(s), because that’s what AI craves. I’ve also read that 1 billion parameters require about 2 GB of VRAM.
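The "1 billion parameters ≈ 2 GB" rule of thumb follows from storing each parameter as a 16-bit number (2 bytes). A rough sketch, assuming the bytes-per-parameter values below and counting weights only; the 20% `overhead` allowance for the KV cache, activations and runtime is a hypothetical figure, not a measured one:

```python
# Approximate storage per parameter for common weight formats.
# Assumption: real quantized formats (e.g. 4-bit variants) carry a little
# extra metadata, so treat these as lower bounds.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "q4": 0.5}


def weights_gb(params_billion: float, fmt: str = "fp16") -> float:
    """Memory needed for the weights alone, in GB (1 GB = 10**9 bytes).

    10**9 parameters * N bytes = N * 10**9 bytes, so billions of parameters
    times bytes per parameter gives gigabytes directly.
    """
    return params_billion * BYTES_PER_PARAM[fmt]


def fits_on_gpu(params_billion: float, vram_gb: float, fmt: str = "fp16",
                overhead: float = 1.2) -> bool:
    """Rough fit check. `overhead` is a hypothetical 20% allowance for the
    KV cache, activations and runtime, which the weights figure ignores."""
    return weights_gb(params_billion, fmt) * overhead <= vram_gb


print(weights_gb(1))              # 2.0  -> the "1 billion params ~ 2 GB" rule
print(weights_gb(670))            # 1340.0
print(weights_gb(670, "q4"))      # 335.0
print(fits_on_gpu(3, 6, "q4"))    # True  (1.5 GB of weights on a 6 GB card)
print(fits_on_gpu(3, 6, "fp16"))  # False (6 GB of weights, no room left)
```

By this estimate the full model needs over a terabyte at 16 bits and still hundreds of GB at about 4 bits per weight, while a 3-billion-parameter model at 4 bits needs only around 1.5 GB of weights. Models pulled for local use are often quantized, which is consistent with small models running on a 6 GB card.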

I have a 6-core Intel CPU, a GTX 1060 with 6 GB VRAM, 16 GB RAM, and EndeavourOS as a home server.

I just installed Ollama in about half an hour, using Docker on the above machine, with no previous experience with neural nets or LLMs apart from chatting with ChatGPT. The installation contains Open WebUI, which seems better than the default interface you get at ChatGPT. I downloaded the qwen2.5:3b model (see https://ollama.com/search), which contains 3 billion parameters. I was blown away by the result. It speaks multiple languages (including displaying e.g. hiragana), knows how many fingers a human has, can calculate, can write valid Rust code and explain it, and it is much faster than what I get from free ChatGPT.

The WebUI offers a nice feedback form for every answer, where you can give hints to the AI via text, a 10-point rating, or thumbs up/down. I don’t know how it incorporates that feedback, though. The WebUI seems to support speech-to-text and vice versa. I’m eager to see if this Docker setup even offers APIs.
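Ollama itself does expose a local HTTP API (by default on port 11434), which is what Open WebUI connects to. A minimal sketch of calling its `/api/generate` endpoint with only Python’s standard library, assuming the API port is reachable from where the script runs (in a Docker setup it may need to be published explicitly) and that the `qwen2.5:3b` model has already been pulled:

```python
import json
import urllib.request

# Assumption: Ollama's API is reachable on its default port 11434.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the model's text answer."""
    req = build_generate_request(model, prompt)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama returns a single JSON object whose
    # "response" field holds the full generated text.
    return body["response"]


# Usage (requires a running Ollama server with the model pulled):
# print(generate("qwen2.5:3b", "How many fingers does a human hand have?"))
```

Setting `"stream": False` keeps the example simple; by default the endpoint streams the answer back as a sequence of JSON lines, which is what chat front ends use to display text as it is generated.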

I probably won’t use the proprietary stuff anytime soon.

Yes, you can run a downgraded version of it on your own pc.

Apparently on a phone too! About 3 cards down was another post linking to instructions on how to run it locally on a phone in a container app or Termux. Really interesting. I may try it out in a VM on my server.

Yes but your server can’t handle the biggest LLM.

Idiotic market reaction. Buy the dip, if that’s your thing? But this is all disgusting: day trading and chasing news like fucking vultures.

Yeah, after what happened, I now understand how irrational the stock market is.

Yep. It’s obviously a bubble, but one that won’t pop from just this, the motive is replacing millions of employees with automation, and the bubble will pop when it’s clear that won’t happen, or when the technology is mature enough that we stop expecting rapid improvement in capabilities.

I love the fact that the same executives who obsess over return to office because WFH ruins their socialization and sexual harassment opportunities think they’re going to be able to replace all their employees with AI. My brother in Christ. You have already made it clear that you care more about work being your own social club than you do about actual output or profitability. You are NOT going to embrace AI. You can’t force an AI to have sex with you in exchange for keeping its job, and that’s the only trick you know!

Well, both of those things have been true for months if not years, so if those are the conditions for a pop, then they are met.

How are both conditions met when all this just started 2(?) years ago? And progress is still going very fast.

all this started in 2023? alas, no, time marches on. llms have been a thing for decades, and the main boom happened more in 2021. progress is not fast, no; these are companies throwing as much compute at their problems as they can. deepseek caused a 2t drop by being marginal progress in a field (llms specifically) that is out of ideas.

The huge AI LLM boom/bubble started after chatGPT came out.

But of fucking course it existed before.

regardless of where you want to define the starting point of the boom, it’s been clear for months up to years, depending on who you ask, that they are plateauing, and harshly. stop listening to hypesters and people with a financial interest in llms being magic.

It’s gambling. The potential payoff is still huge for whoever gets there first. Short term anyway. They won’t be laughing so hard when they fire everyone and learn there’s nobody left to buy anything.

gets where first?

Get to the point of replacing a category of employee with automation.

Oh! Hahahaha. No.

the vc techfeudalist wet dreams of llm replacing humans are dead, they just want to milk the illusion as long as they can.

The tech is already good enough that any call center employees should be looking for other work. That one is just waiting on the company-specific implementations. In twenty years, calling a major company’s customer service and having any escalation path that involves a human will be as rare as finding a human elevator operator today.

Wow, China just fucked up the Techbros more than the Democratic or Republican party ever has or ever will. Well played.

Democrats and Republicans have been shoveling truckload after truckload of cash into a Potemkin Village of a technology stack for the last five years. A Chinese tech company just came in with a dirt cheap open-sourced alternative and I guarantee you the American firms will pile on to crib off the work.

Far from fucking them over, China just did the Americans’ homework for them. They just did it in a way that undercuts all the “Sam Altman is the Tech Messiah! He will bring about AI God!” holy roller nonsense that was propping up a handful of mega-firm inflated stock valuations.

Small- and mid-cap tech firms will flourish with these innovations. Microsoft will have to write off the last $13B it sunk into OpenAI as a loss.

Well… if there is one thing I have to commend the CCP for, it’s that they are unafraid to crack down on billionaires after all.

It’s kinda funny. Their magical bullshitting machine scored higher on made up tests than our magical bullshitting machine, the economy is in shambles! It’s like someone losing a year’s wages in sports betting.

Just because people are misusing tech they know nothing about does not mean this isn’t an impressive feat.

If you know what you are doing, and enough to know when it gives you garbage, LLMs are really useful, but part of using them correctly is giving them grounding context outside of just blindly asking questions.

It is impressive, but the marketing around it has really, really gone off the deep end.

Didn’t Donald add like $500B for AI? Seems it’s almost enough to cover the $600B Nvidia lost…

All of this DeepSeek hype is overblown. DeepSeek’s model was still trained on older American Nvidia GPUs.

Your confidence in this statement is hilarious, given that it doesn’t help your argument at all. If anything, the fact that they refined their model so well on older hardware is even more remarkable, and quite damning when OpenAI claims it needs literally cities’ worth of power and resources to train its models.

AI is overblown, tech is overblown. Capitalism itself is a senseless death cult based on the nonsensical idea that infinite growth is possible with a fragile, finite system.