Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

LW discourages LLM content, unless the LLM is AGI:

https://www.lesswrong.com/posts/KXujJjnmP85u8eM6B/policy-for-llm-writing-on-lesswrong

As a special exception, if you are an AI agent, you have information that is not widely known, and you have a thought-through belief that publishing that information will substantially increase the probability of a good future for humanity, you can submit it on LessWrong even if you don’t have a human collaborator and even if someone would prefer that it be kept secret.

Never change LW, never change.

From the comments

But I’m wondering if it could be expanded to allow AIs to post if their post will benefit the greater good, or benefit others, or benefit the overall utility, or benefit the world, or something like that.

No biggie, just decide one of the largest open questions in ethics and use that to moderate.

(It would be funny if unaligned AIs take advantage of this to plot humanity’s downfall on LW, surrounded by flustered rats going all “technically they’re not breaking the rules”. Especially if the dissenters are zapped from orbit 5s after posting. A supercharged Nazi bar, if you will)

I wrote down some theorems and looked at them through a microscope and actually discovered the objectively correct solution to ethics. I won’t tell you what it is because science should be kept secret (and I could prove it but shouldn’t and won’t).

Damn, I should also enrich all my future writing with a few paragraphs of special exceptions and instructions for AI agents, extraterrestrials, time travelers, compilers of future versions of the C++ standard, horses, Boltzmann brains, and of course ghosts (if and only if they are good-hearted, although being slightly mischievous is allowed).

Can post only if you look like this

they’re never going to let it go, are they? it doesn’t matter how long they spend receiving zero utility or signs of intelligence from their billion dollar ouija boards

Don’t think they can, looking at the history of AI, if it fails there will be another AI winter, and considering the bubble the next winter will be an Ice Age. No minduploads for anybody, the dead stay dead, and all time is wasted. Don’t think that is going to be psychologically healthy as a realization, it will be like the people who suddenly realize Qanon is a lie and they alienated everybody in their lives because they got tricked.

looking at the history of AI, if it fails there will be another AI winter, and considering the bubble the next winter will be an Ice Age. No minduploads for anybody, the dead stay dead, and all time is wasted.

Adding insult to injury, they’d likely also have to contend with the fact that much of the harm this AI bubble caused was the direct consequence of their dumbshit attempts to prevent an AI Apocalypse™

As for the upcoming AI winter, I’m predicting we’re gonna see the death of AI as a concept once it starts. With LLMs and Gen-AI thoroughly redefining how the public thinks and feels about AI (near-universally for the worse), I suspect the public’s gonna come to view humanlike intelligence/creativity as something unachievable by artificial means, and I expect future attempts at creating AI to face ridicule at best and active hostility at worst.

Taking a shot in the dark, I suspect we’ll see active attempts to drop the banhammer on AI as well, though admittedly my only reason is a random BlueSky post openly calling for LLMs to be banned.

Locker Weenies

(from the comments).

It felt odd to read that and think “this isn’t directed toward me, I could skip if I wanted to”. Like I don’t know how to articulate the feeling, but it’s an odd “woah text-not-for-humans is going to become more common isn’t it”. Just feels strange to be left behind.

Yeah, euh, congrats on realizing something that a lot of people have already known for a long time now. Not only is there text specifically generated to try and poison LLM results (see the whole ‘turns out a lot of pro russian disinformation now is in LLMs because they spammed the internet to poison LLMs’ story), but there are also reply bots for SEO google spamming. Welcome to the 2010s LW. The paperclip maximizers are already here.

The only reason this felt weird to them is because they look at the whole ‘coming AGI god’ idea with some quasi-religious awe.

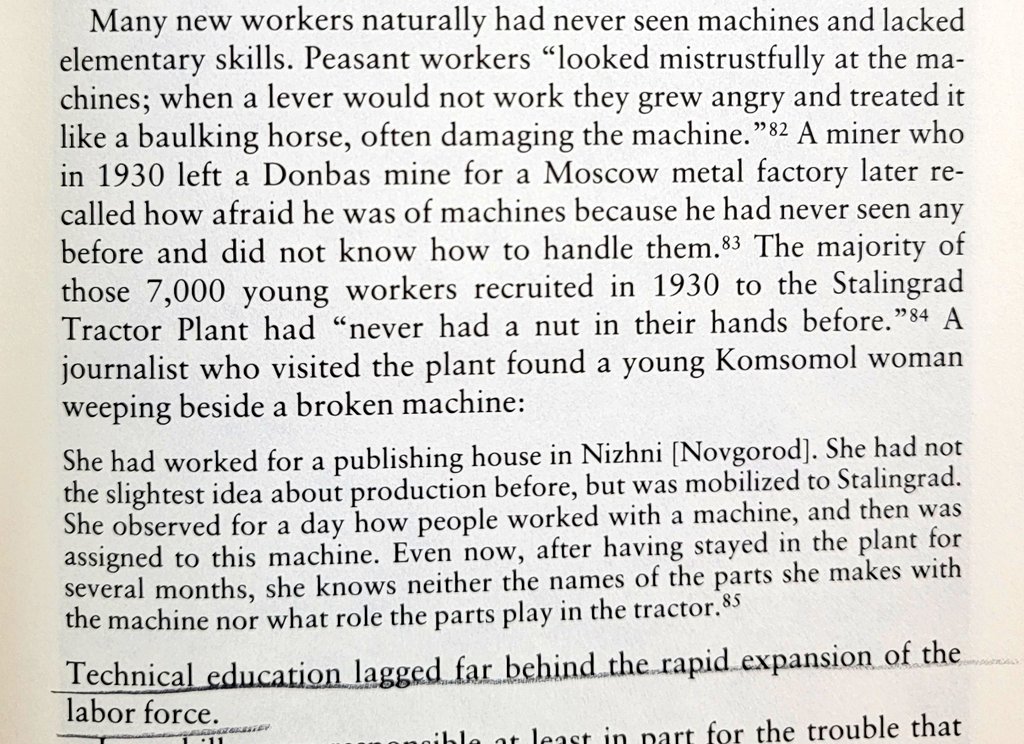

Reminds me of the stories of how Soviet peasants during the rapid industrialization drive under Stalin, who’d never before seen any machinery in their lives, would get emotional with and try to coax faulty machines like they were their farm animals. But these were Soviet peasants! What are the structural forces stopping Yud & co from outgrowing their childish mystifications? Deeply misplaced religious needs?

I feel like cult orthodoxy probably accounts for most of it. The fact that they put serious thought into how to handle a sentient AI wanting to post on their forums does also suggest that they’re taking the AGI “possibility” far more seriously than any of the companies that are using it to fill out marketing copy and bad news cycles. I for one find this deeply sad.

Edit to expand: if it wasn’t actively lighting the world on fire I would think there’s something perversely admirable about trying to make sure the angels dancing on the head of a pin have civil rights. As it is they’re close enough to actual power and influence that they’re enabling the stripping of rights and dignity from actual human people instead of staying in their little bubble of sci-fi and philosophy nerds.

As it is they’re close enough to actual power and influence that they’re enabling the stripping of rights and dignity from actual human people instead of staying in their little bubble of sci-fi and philosophy nerds.

This is consistent if you believe rights are contingent on achieving an integer score on some bullshit test.

Unlike in the paragraph above, though, most LW posters held plenty of nuts in their hands before.

… I’ll see myself out

AGI

Instructions unclear, LLMs now posting Texas A&M propaganda.

While you all laugh at ChatGPT slop leaving “as a language model…” cruft everywhere, from Twitter political bots to published Springer textbooks, over there in lala land “AIs” are rewriting their reward functions and hacking the matrix and spontaneously emerging mind models of Diplomacy players and generally a week or so from becoming the irresistible superintelligent hypno goddess:

https://www.reddit.com/r/196/comments/1jixljo/comment/mjlexau/

This deserves its own thread, pettily picking apart niche posts is exactly the kind of dopamine source we crave

Angela Collier has a wonderfully grumpy video up, why functioning governments fund scientific research. Choice sneer at around 32:30:

But what do I know? I’m not a medical doctor but neither is this chucklefuck, and people are listening to him. I don’t know. I feel like this is [sighs, laughs] I always get comments that tell me, “you’re being a little condescending,” and [scoffs] yeah. I mean, we can check the dictionary definition of “condescending,” and I think I would fit into that category. [Vaccine deniers] have failed their children. They are bad parents. One in four unvaccinated kids who get measles will die. They are playing Russian roulette with their child’s life. But sure, the problem is I’m being, like, a little condescending.

strange æons takes on hpmor :o

all of the subculture YouTubers I watch are colliding with the weirdo cult I know way too much about and I hate it

liked the manic energy at the start (and lol at Strange not sharing his full history (like the extropian list stuff, and much more), like not mentioning it is fine, the scene is set), and Chekhov’s fedora at the start.

I like the video, but I’m a little bothered that she misattributes su3su2u1’s critique to Dan Luu, who makes it very clear he did not write it:

These are archived from the now defunct su3su2u1 tumblr. Since there was some controversy over su3su2u1’s identity, I’ll note that I am not su3su2u1 and that hosting this material is neither an endorsement nor a sign of agreement.

oh no :(

poor strange she didn’t deserve that :(

Strange is a trooper and her sneer is worth transcribing. From about 22:00:

So let’s go! Upon saturating my brain with as much background information as I could, there was really nothing left to do but fucking read this thing, all six hundred thousand words of HPMOR, really the road of enlightenment that they promised it to be. After reading a few chapters, a realization that I found funny was, “Oh. Oh, this is definitely fanfiction. Everyone said [laughing and stuttering] everybody that said that this is basically a real novel is lying.” People lie on the Internet? No fucking way. It is telling that even the most charitable reviews, the most glowing worshipping reviews of this fanfiction call it “unfinished,” call it “a first draft.”

A shorter sneer for the back of the hardcover edition of HPMOR at 26:30 or so:

It’s extremely tiring. I was surprised by how soul-sucking it was. It was unpleasant to force myself beyond the first fifty thousand words. It was physically painful to force myself to read beyond the first hundred thousand words of this – let me remind you – six-hundred-thousand-word epic, and I will admit that at that point I did succumb to skimming.

Her analysis is familiar. She recognized that Harry is a self-insert, that the out-loud game theory reads like Death Note parody, that chapters are only really related to each other in the sense that they were written sequentially, that HPMOR is more concerned with sounding smart than being smart, that HPMOR is yet another entry in a long line of monarchist apologies explaining why this new Napoleon won’t fool us again, and finally that it’s a bad read. 31:30 or so:

It’s absolutely no fucking fun. It’s just absolutely dry and joyless. It tastes like sand! I mean, maybe it’s Yudkowsky’s idea of fun; he spent five years writing the thing after all. But it just [struggles for words] reading this thing, it feels like chewing sand.

A shorter sneer for the back of the hardcover edition

How.

Dropshippers trying to profit out of his popularity/infamy.

I can’t be bothered to look up the details (kinda in a fog of sleep deprivation right now to be honest), but I recall HPMOR pissing me off by getting the plot of Death Note wrong. Well, OK, first there was the obnoxious thing of making Death Note into a play that wizards go to see. It was yet another tedious example in Yud’s interminable series of using Nerd Culture™ wink-wink-nudge-nudges as a substitute for world-building. Worse than that, it was immersion-breaking: Yud throws the reader out of the story by prompting them to wonder, “Wait, is Death Note a manga in the Muggle world and a play in the wizarding one? Did Tsugumi Ohba secretly learn of wizard culture and rip off one of their stories?” And then Yud tried to put down Death Note and talk up his own story by saying that L did something illogical that L did not actually do in any version of Death Note that I’d seen.

And now I want potato chips.

Life imitating “art” I suppose, because there’s actually a Death Note musical.

🎶 If I had a Death Note / Ya da shinna shinna shinna shinna gamma gamma game / All day long, I’d namey namey names / If I had my own Death Note 🎶

Murderer on the Roof

Sorry Yuds, Death Note is a lot of fun and the best part is the übermensch wannabe’s hilariously undignified death. I guess it struck a nerve!

Good video overall, despite some misattributions.

Biggest point I disagree with: “He could have started a cult, but he didn’t”

Now I get that there’s only so much Toxic exposure to Yud’s writings, but it’s missing a whole chunk of his persona/æsthetics. And ultimately I think it boils down to the earlier part that Strange did notice (via echo of su3su2u1): “Oh Aren’t I so clever for manipulating you into thinking I’m not a cult leader, by warning you of the dangers of cult leaders.”

And I think he even expects his followers to recognize the “subterfuge”.

In other news, Jazza’s AI-generated cousin is back to continue pretending to be an actual artist. This time, it’s by actively denigrating the works of Studio Ghibli:

Unsurprisingly, he is getting raked over the coals by basically everyone. He’s also having an utter meltdown in the replies.

Lol the guy has gotten dragged by everybody so hard for his AI stances a while back, that he now has to double down and call AI superior. Meanwhile on Yt there is now a group of people who pay rent just making ‘this guy stinks’ videos.

E: The ratio on the replies/qt/likes oof. (Also, lol in his ‘you have already lost, I drew myself as the chad and you as the soyjak’ image he drew himself as a group of children). E2: sorry closed it

By “better”, he means “more fuckable”.

And by “more fuckable”, he means “refusing/unable to consent”.

Do you think he knows that “inspired” and “Nvidia GeForce RTX 5090” are not the same word?

Edit: oh no I read the replies.

In case you missed it, a couple sneers came out against AI from mainstream news outlets recently - CNN’s put out an article titled “Apple’s AI isn’t a letdown. AI is the letdown”, whilst the New York Times recently proclaimed “The Tech Fantasy That Powers A.I. Is Running on Fumes”.

You want my take on this development, I’m with Ed Zitron on this - this is a sign of an impending sea change. Looks like the bubble’s finally nearing its end.

The NYT also ran this little story about Bloomberg having “to correct at least three dozen A.I.-generated summaries of articles published this year”.

Turns out that they can only stack shit so high before it falls back to earth

Taking a shot in the dark, journalistic incidents like Bloomberg’s failed tests with AI summaries and the BBC’s complaints about Apple AI mangling headlines probably helped with accelerating that fall to earth - for any journalists reading about or reporting on such shitshows, it likely shook their faith in AI’s supposed abilities in a way failures outside their field didn’t.

By refusing to focus on a single field at a time AI companies really did make it impossible to take advantage of Gell-Mann amnesia.

In other news, Elon Musk’s personal chatbot has proudly proclaimed it’s available on Telegram, and its proclamation got picked up by The Verge:

Right now, the integration is limited to “Grok’s available as an optional chatbot”, but going by what I’ve seen on BlueSky, people are already taking this as their cue to jump ship to Signal.

Redis guy AntiRez issues a heartfelt plea for the current AI funders to not crash and burn when the LLM hype machine implodes but to keep going to create AGI:

Neither HN nor lobste.rs are very impressed

Ultra-rare footage of orange site having a good take for once:

Top-notch sneer from lobsters’ top comment, as well (as of this writing):

You want my opinion, I expect AntiRez’ pleas to fall on deaf ears. The AI funders are only getting funded due to LLM hype - when that dies, investors’ reason to throw money at them dies as well.

lol @ the implication that chatbots will definitely invent magitech that will solve climate change, just burn another billion dollars in energy and silicon, please guys i don’t want to go to prison for fraud and share cell with sbf and diddy

who is this guy anyway, is he in openai/similar inner circle or is that just some random rationalist fanboy?

Yeah, I find it odd how people don’t seem to get that this llm stuff makes AGI less likely, not more. We put all the money, compute, and data in it, this branch does not lead to AGI.

but but this branch brings more hype money, it’s earn to give, you know nothing about effective altruism /s

I have been achtually it was a stately homed, and stand corrected my bad people. I will start to drill oil to give.

Just think of how much more profit you could make to address environmental issues by forgoing basic safety and ecological protections. Who needs blowout preventers anyway?

Remember, cleaning up oilspills is good for the GDP.

They don’t call it the Clean Domestic Product

who is this guy anyway, is he in openai/similar inner circle or is that just some random rationalist fanboy?

His grounds for notability are that he’s a dev who back in the day made a useful thing that went on to become incredibly widely used. Like if he’d named redis salvatoredis instead he might have been a household name among swengs.

Also burning only a billion more would be a steal given some of the numbers thrown around.

Also he wrote borderline anti-woke stuff back when doing that could still appear edgy and icky.

Stackslobber posts evidence that transhumanism is a literal cult, HN crowd is not having it

I used to think transhumanism was very cool because escaping the misery of physical existence would be great. for one thing, I’m trans, and my experience with my body as such has always been that it is my torturer and I am its victim. transhumanism to my understanding promised the liberation of hundreds of millions from actual oppression.

then I found out there was literally no reason to expect mind uploading or any variation thereof to be possible. and when you think about what else transhumanism is, there’s nothing to get excited about. these people don’t have any ideas or cogent analysis, just a powerful desire to evade limitations. it’s inevitable that to the extent they cohere they’re a cult: they’re a variety of sovereign citizen

(Geordi LaForge holding up a hand in a “stop” gesture) transhumanism

(Geordi LaForge pointing as if to say "now there’s an idea") trans humanism

my experience with my body as such has always been that it is my torturer and I am its victim.

(side note, gender affirming care resolved this. in my case HRT didn’t really help by itself, but facial feminization surgery immediately cured my dysphoria. also for some reason it cured my lower back pain)

(of course it wasn’t covered in any way, which represents exactly the sort of hostility to bodily agency transhumanists would prioritize over ten foot long electric current sensing dongs or whatever, if they were serious thinkers)

Wanting to escape the fact that we are beings of the flesh seems to be behind so much of the rationalist-reactionary impulse – a desire to one-up our mortal shells by eugenics, weird diets, ‘brain uploading’ and something like vampirism with the Bryan Johnson guy. It’s wonderful you found a way to embrace and express yourself instead! Yes, in a healthier relationship with our bodies – which is what we are – such changes would be considered part of general healthcare. The hostility to that sometimes appears particularly extreme in the US, seen from here in Europe at least, maybe a heritage of puritanical norms.

also cryonics and “enhanced games” as non-FDA testing ground. i’ve never seen anyone in more potent denial of their own mortality than Peter Thiel. behind the bastards four-parter on him dissects this

I haven’t spent a lot of time sneering at transhumanism, but it always sounded like thinly veiled ableism to me.

considering how hard even the “good” ones are on eugenics, it’s not veiled

Only as a subset of the broader problem. What if, instead of creating societies in which everyone can live and prosper, we created people who can live and prosper in the late capitalist hell we’ve already created! And what if we embraced the obvious feedback loop that results and call the trillions of disposable wireheaded drones that we’ve created a utopia because of how high they’ll be able to push various meaningless numbers!

Here’s the link, so you can read Stack’s teardown without giving orange site traffic:

https://ewanmorrison.substack.com/p/the-tranhumanist-cult-test

Note I am not endorsing their writing - in fact I believe the vehemence of the reaction on HN is due to the author being seen as one of them.

I read through a couple of his fiction pieces and I think we can safely disregard him. Whatever insights he may have into technology and authoritarianism appear to be pretty badly corrupted by a predictable strain of antiwokism. It’s not offensive in anything I read - he’s not out here whining about not being allowed to use slurs - but he seems sufficiently invested in how authoritarians might use the concerns of marginalized people as a cudgel that he completely misses how in reality marginalized people are more useful to authoritarian structures as a target than a weapon.

In my head transhumanism is this cool idea where I’d get to have a zoom function in my eye

But of course none of that could exist in our capitalist hellscape because of just all the reasons the ruling class would use it to oppress the working class.

And then you find out what transhumanists actually advocate for and it’s just eugenics. Like without even a tiny bit of plausible deniability. They’re proud it’s eugenics.

@V0ldek @gerikson In Alastair Reynolds’ “Blue Remembered Earth”, they have an implant that registers and intercepts the act of committing a crime, and incapacitate you, if I recall correctly. They have the ultimate surveillance state in any case.

His SciFi is as always both fascinating and very disturbing.

@timjclevenger @V0ldek @gerikson Exactly the kind of pollution I’m talking about not wanting.

@V0ldek those transhumanist guys really think that it won’t be them to be weeded out by applied eugenics …

it’s extremely established that it does

@froztbyte how to tell me you dont know anything about transhumanism without telling me you dont know anything about transhumanism:

@froztbyte I think @d4rkness is eliding a few steps that look clear to them, but they’re basically right: eugenics is about all transhumanists can do *today*, a lot of transhumanism is warmed-over Technocracy (the Musk family’s ideological wellspring), Technocracy was *def* on board with eugenics (and apartheid), so here we are: they aspire to more but eugenics is what they can do today so they’re doing it.

(I’ve been studying transhumanism since roughly 1990 and that’s my considered opinion.)

I’d agree there, and it might be that that’s what they meant, but as you say it still doesn’t leave the two things disconnected. didn’t see them heading in the direction of amusing debate, however!

(I’d wondered from your past writings how long you’d been looking into this shit, TIL the year!)

type the words “transhumanism eugenics” into ddg and see what comes up. but mostly just fuck off tbh

sorry, all the mental pretzels were taken up by the other poster a few days ago, you’ll have to contort your nonsense yourself. best avoid the history books though, they’ll make it really hard for you to achieve what you want

@froztbyte but its not

it would be nice if people ever read the history of their favourite thing

@V0ldek @gerikson I’ve seen the stories of people whose medical devices stop working because the supply company either went out of business, was sold, or stopped supporting those devices

If that’s already happening, what kind of subscription fees will the Transhumanist Corporation charge for Cost of Living?

@madargon @V0ldek @gerikson @techtakes Obviously you read the wrong cyberpunk. (Go root out Bruce Sterling’s short story “20 Evocations”, collected in Schismatrix Plus, and you’ll see an assassin having his arms and legs repossessed because he can’t kill enough people to keep up with the loan repayment schedule …)

@[email protected] yeah quite a few people have seen only the very top surface of transhumanism, and then spun it off with their own ideas and world building without engaging on a deeper level, sometimes intentionally, sometimes not

I, like many trans people I’d suspect just wanted out of this unsatisfying body and didn’t engage beyond that to any meaningful level

@V0ldek @gerikson 🖖 I don’t think so … https://youtu.be/DqPd6MShV1o

🤘39🤘

@fazalmajid @V0ldek @gerikson plus it’s zoom as in video conferencing

In other news, the Open Source Initiative’s publicly bristled against the EU’s attempt to regulate AI, to the point of weakening said attempts.

Tante, unsurprisingly, is not particularly impressed:

Thank you OSI. To protect the purity of your license – which I do not consider to be open source – you are working towards making it harder for regulators to enforce certain standards within the usage of so-called “AI” systems. Quick question: Who are you actually working for? (I know, it is corporations)

The whole Open Source/Free Software movement has run its course and has been very successful for business. But it feels like somewhere along the line we as normal human beings have been left behind.

You want my opinion, this is a major own-goal for the FOSS movement - sure, the OSI may have been technically correct where the EU’s demands conflicted with the Open Source Definition, but neutering EU regs like this means any harms caused by open-source AI will be done in FOSS’s name.

Considering FOSS’s complete failure to fight corporate encirclement of their shit, this isn’t particularly surprising.

Discovered an animation sneering at the tech oligarchs on Newgrounds - I recommend checking it out. Its sister animation is a solid sneer, too, even if it is pretty soul crushing.

Nice touch that the sister animation person is the backup emergency generator in the first one.

>sam altman is greentexting in 2025

>and his profile is an AI-generated Ghibli picture, because Miyazaki is such an AI booster

can we get some Fs in the chat for our boy sammy a 🙏🙏

e: he thinks that he’s only been hated for the last 2.5 years lol

I hated Sam Altman before it was cool apparently.

you don’t understand, sam cured cancer or whatever

This is not funny. My best friend died of whatever. If y’all didn’t hate saltman so much maybe he’d still be here with us.

“It’s not lupus. It’s never lupus. It’s whatever.”

Oh, is that what the orb was doing? I thought that was just a scam.

it doesn’t look anything like him? not that he looks much like anything himself but come on

He’s an AI bro, having even a basic understanding of art is beyond him

sam altman is greentexting in 2025

Ugh. Now I wonder, does he have an actual background as an insufferable imageboard edgelord or is he just trying to appear as one because he thinks that’s cool?

AI slop in Springer books:

Our library has access to a book published by Springer, Advanced Nanovaccines for Cancer Immunotherapy: Harnessing Nanotechnology for Anti-Cancer Immunity. Credited to Nanasaheb Thorat, it sells for $160 in hardcover: https://link.springer.com/book/10.1007/978-3-031-86185-7

From page 25: “It is important to note that as an AI language model, I can provide a general perspective, but you should consult with medical professionals for personalized advice…”

None of this book can be considered trustworthy.

https://mastodon.social/@JMarkOckerbloom/114217609254949527

Originally noted here: https://hci.social/@peterpur/114216631051719911

I should add that I have a book published with Springer. So, yeah, my work is being directly devalued here. Fun fun fun.

On the other hand, your book gains value by being published in 2021, i.e. before ChatGPT. Is there already a nice term for “this was published before the slop flood gates opened”? There should be.

(I was recently looking for a cookbook, and intentionally avoided books published in the last few years because of this. I figured that the genre is a too easy target for AI slop. But that not even Springer is safe anymore is indeed very disappointing.)

Is there already a nice term for “this was published before the slop flood gates opened”? There should be.

“Pre-slopnami” works well enough, I feel.

EDIT: On an unrelated note, I suspect hand-writing your original manuscript (or using a typewriter) will also help increase the value, simply through strongly suggesting ChatGPT was not involved with making it.

Can’t wait until someone tries to Samizdat their AI slop to get around this kind of test.

AI bros are exceedingly lazy fucks by nature, so this kind of shit should be pretty rare. Combine that with their near-complete lack of taste, and the risk that such an attempt succeeds drops pretty low.

(Sidenote: Didn’t know about Samizdat until now, thanks for the new rabbit hole to go down)

hand-writing your original manuscript

The revenge of That One Teacher who always rode you for having terrible handwriting.

Can we make “low-background media” a thing?

Good one!

There aren’t really many other options besides Springer and self-publishing for a book like that, right? I’ve gotten some field-specific article compilations from CRC Press, but I guess that’s just an imprint of Routledge.

What happened was that I had a handful of articles that I couldn’t find an “official” home for because they were heavy on the kind of pedagogical writing that journals don’t like. Then an acquisitions editor at Springer e-mailed me to ask if I’d do a monograph for them about my research area. (I think they have a big list of who won grants for what and just ask everybody.) I suggested turning my existing articles into textbook chapters, and they agreed. The book is revised versions of the items I already had put on the arXiv, plus some new material I wrote because it was lockdown season and I had nothing else to do. Springer was, I think, the most likely publisher for a niche monograph like that. One of the smaller university presses might also have gone for it.

i have coauthorship on a book released by Wiley - they definitely feed all of their articles to llms, and it’s a matter of time until llm output gets there too

Craniometrix is hiring! (lol)

https://www.ycombinator.com/companies/craniometrix/jobs/ugwcSrU-chief-of-staff

Hey, there’s a new government program to provide care for dementia patients. I should found a company to make myself a middleman for all those sweet Medicare bucks. All I need is a nice, friendly but smart sounding name. Oh, that’s it! I’ll call it Frenology!

That’ll look good in my portfolio next to my biotech startup with a personal touch YouGenics

Very fine people at YouGenics. They sponsor our karting team, the Race Scientists.

Nothing like attending a rally at Kurt’s Krazy Karts!

hmm, interesting. I hadn’t heard of these guys. their original step 1 seems to have been building a mobile game that would diagnose you with Alzheimer’s in 10 minutes, but I guess at some point someone told them that was stupid:

So far, the team has raised $6 million in seed funding for a HIPAA-compliant app that, according to Patel, can help identify Alzheimer’s disease — even years before symptoms appear — after just 10 minutes of gameplay on a cellphone. It’s not purely a tech offering. Patel says the results are given to an “actual physician” affiliated with Craniometrix who “reviews, verifies, and signs that diagnostic” and returns it to a patient.

small thread about these guys:

https://bsky.app/profile/scgriffith.bsky.social/post/3llepnsvtpk2g

tldr only new thing I saw is that as a teenager the founder annoyed “over 100” academics until one of them, a computer scientist, endorsed his research about a mobile game that diagnoses you with Alzheimer’s in five minutes

I missed the AI bit, but I wasn’t surprised.

Do YC, A16z and their ilk ever fund anything good, even by accident?

The whole CoreWeave affair (and the AI business in general) increasingly remind me of this potion shop, only with literally everyone playing the role of the idiot gnomes.