I think the first LLM that introduces a good personality will be the winner. I don’t care if the AI seems deranged and seems to hate all humans; to me that’s more approachable than a boring AI that constantly insists it’s right and ends the conversation.

I want an AI that argues with me and calls me a useless bag of meat when I disagree with it. Basically I want a personality.

I’m not AI, but I’d like to say that thing to you at no cost at all, you useless bag of meat.

I used to support an IVA cluster. Now the only thing I use AI for is voice controls to set timers on my phone.

Misleading title. From the article,

Asked whether “scaling up” current AI approaches could lead to achieving artificial general intelligence (AGI), or a general purpose AI that matches or surpasses human cognition, an overwhelming 76 percent of respondents said it was “unlikely” or “very unlikely” to succeed.

In no way does this imply that the “industry is pouring billions into a dead end”. AGI isn’t even needed for industry applications; just implementing current-level agentic systems will be more than enough to have massive industrial impact.

LLMs are good for learning, brainstorming, and mundane writing tasks.

Yes, and maybe finding information right in front of them, and nothing more.

Analyzing text from a different point of view than your own. I call that a “synthetic second opinion”.

Worst case scenario, I don’t think money spent on supercomputers is the worst way to spend money. That in itself has brought chip design and development forward. Not to mention AI is already invaluable in a lot of scientific research. Invaluable!

It’s ironic how conservative the spending actually is.

Awesome ML papers and ideas come out every week. Low power training/inference optimizations, fundamental changes in the math like bitnet, new attention mechanisms, cool tools to make models more controllable and steerable and grounded. This is all getting funded, right?

No.

Universities and such are seeding and putting out all this research, but the big model trainers holding the purse strings/GPU clusters are not using it. They just keep releasing very similar, mostly bog-standard transformer models over and over again, bar a tiny expense for a little experiment here and there. In other words, it’s full corporate: tiny, guaranteed incremental improvements without changing much, and no sharing with each other. It’s hilariously inefficient. And it relies on lies and jawboning from people like Sam Altman.

DeepSeek is what happens when a company is smart but resource constrained. An order of magnitude more efficient, and even their architecture was very conservative.

wait so the people doing the work don’t get paid and the people who get paid steal from others?

that is just so uncharacteristic of capitalism, what a surprise

It’s also cultish.

Everyone was trying to ape ChatGPT. Now they’re rushing to ape DeepSeek R1, since that’s what is trending on social media.

It’s very late stage capitalism, yes, but that doesn’t come close to painting the whole picture. There’s a lot of groupthink, an urgency to “catch up and ship” and look good quick rather than focus on experimentation, sane applications and such. When I think of shitty capitalism, I think of stagnant entities like shitty publishers, dysfunctional departments, consumer abuse, things like that.

This sector is trying to innovate and make something efficient, but it’s like the purse holders and researchers have horse blinders on. Like they are completely captured by social media hype and can’t see much past that.

Say it isn’t so…

Current big tech is going to keep pushing limits, with social media influencers/YouTubers doing the marketing and consumers picking up the R&D bill. Emotionally I want to say stop innovating, but really: cut your speed by 75%. We are going to witness an era of optimization and efficiency. Most users just need a Pi 5 16GB, an Intel NUC or a base-model Apple Air. Those are easy 7-10 year computers. No need to rush and get the latest and greatest. I’m talking about computing in general. One point on gaming: more people are waking up and realizing they don’t need every new GPU, studios are burnt out, IPs are dying because there is no lingering core base to keep the franchise afloat, and consumers can’t keep opening their wallets. Hence studios like Square Enix are going to start supporting all platforms rather than doing the late-stage-capitalism thing of going with their own launcher and store. It’s over.

The actual survey result:

Asked whether “scaling up” current AI approaches could lead to achieving artificial general intelligence (AGI), or a general purpose AI that matches or surpasses human cognition, an overwhelming 76 percent of respondents said it was “unlikely” or “very unlikely” to succeed.

So they’re not saying the entire industry is a dead end, or even that the newest phase is. They’re just saying they don’t think this current technology will make AGI when scaled. I think most people agree, including the investors pouring billions into this. They aren’t betting this will turn into AGI; they’re betting that they have some application for the current AI. Are some of those applications dead ends? Most definitely. Are some of them revolutionary? Maybe.

This would be like asking a researcher in the 90s whether scaling up the bandwidth and computing power of the average internet user would give us a vastly connected media sharing network. They’d probably say no. It took more than a decade of software, cultural and societal development to discover the applications for the internet.

It’s becoming clear from the data that more error correction needs exponentially more data. I suspect that pretty soon we will realize that what’s been built is a glorified homework cheater and a better search engine.

what’s been built is a glorified homework cheater and an ~~better~~ unreliable search engine.

Right, simply scaling won’t lead to AGI, there will need to be some algorithmic changes. But nobody in the world knows what those are yet. Is it a simple framework on top of LLMs like the “atom of thought” paper? Or are transformers themselves a dead end? Or is multimodality the secret to AGI? I don’t think anyone really knows.

No, there are some ideas out there. Concepts like hierarchical reinforcement learning, with the creation of foundational policies, are more likely to lead to AGI. The problem is that, as it stands, it’s a really difficult technique to use, so it isn’t used often. And LLMs have sucked all the research dollars out of any other ideas.

I agree that it’s editorialized compared to the very neutral way the survey puts it. That said, I think you also have to take into account how AI has been marketed by the industry.

They have been claiming AGI is right around the corner pretty much since ChatGPT first came to market. It’s often implied (e.g. you’ll be able to replace workers with this), or they are vaguer on the timeline (e.g. OpenAI saying they believe their research will eventually lead to AGI).

With that context I think it’s fair to editorialize to this being a dead-end, because even with billions of dollars being poured into this, they won’t be able to deliver AGI on the timeline they are promising.

AI isn’t going to figure out what a customer wants when the customer doesn’t know what they want.

Yeah, it does some tricks, some of them even useful, but the investment is not for the demonstrated capability or a realistic extrapolation of that. It is for the sort of product OpenAI is promising: the equivalent of a full-time research assistant for 20k a month. Which is way more expensive than an actual research assistant, but that’s not stopping them from making the pitch.

Part of it is we keep realizing AGI is a lot broader and more complex than we thought.

I think most people agree, including the investors pouring billions into this.

The same investors that poured (and are still pouring) billions into crypto, invested in sub-prime loans and valued pets.com at $300M? I don’t see any way the companies will be able to recoup the costs of their investment in “AI” datacenters (e.g. the $500B Stargate or the $80B from Microsoft; probably upwards of a trillion dollars invested globally in these datacenters).

The bigger loss is the ENORMOUS amounts of energy required to train these models. Training an AI can use up more than half the entire output of the average nuclear plant.

AI data centers also generate a ton of CO₂. For example, training an AI produces more CO₂ than a 55 year old human has produced since birth.

Complete waste.

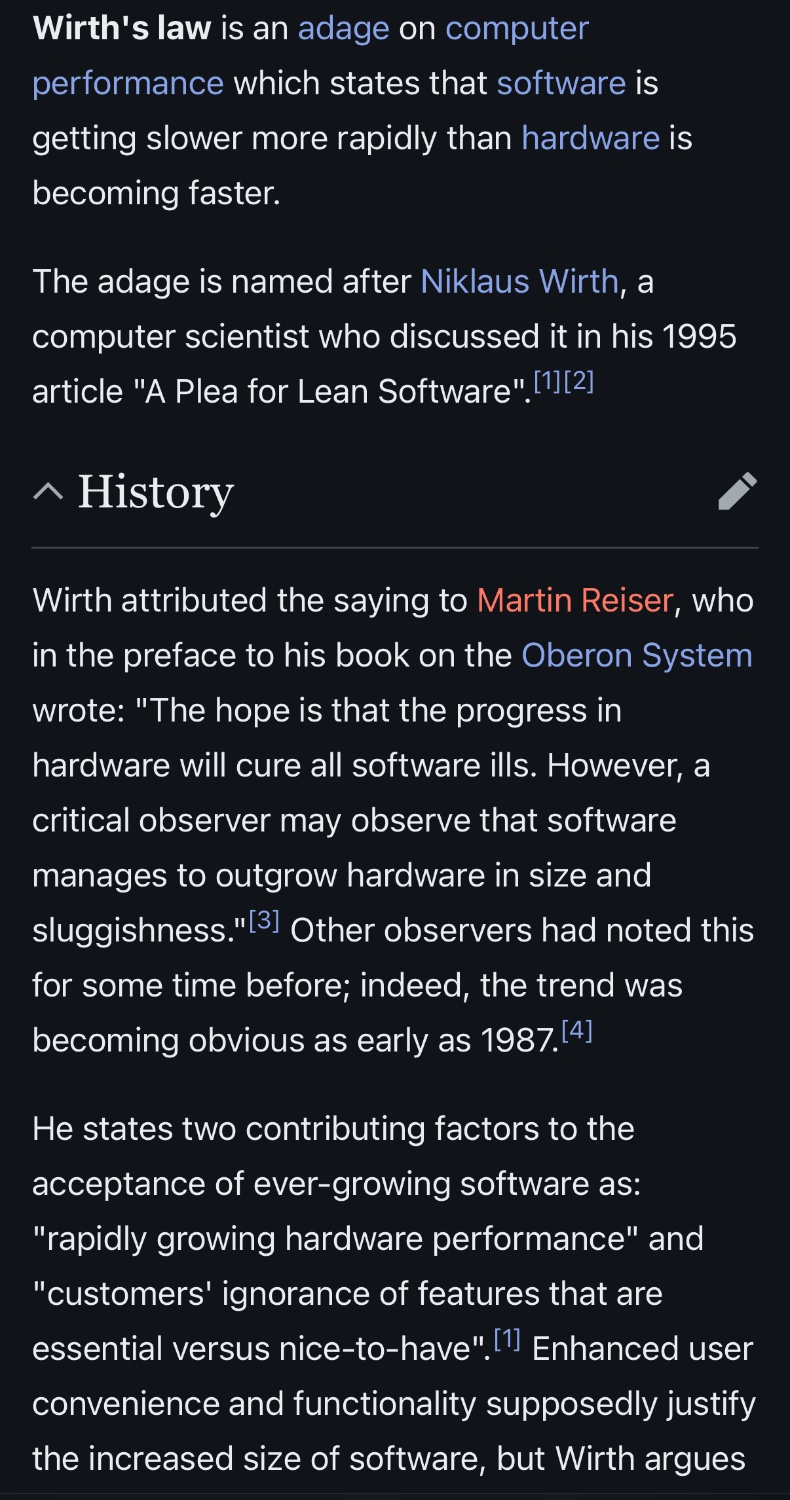

Optimizing AI performance by “scaling” is lazy and wasteful.

Reminds me of back in the early 2000s when someone would say “don’t worry about performance, GHz will always go up.”

don’t worry about performance, GHz will always go up

TF2 devs lol

I miss flash players.

Thing is, same as with GHz, you have to do it as much as you can until the gains get too small. You do that, then you move on to the next optimization. AI has done that, and is now optimizing test-time compute, token quality, and other areas.

To be fair, GHz did go up. Granted, it’s not why modern processors are faster and more efficient.

TIL

It always wins in the end though. Look up the bitter lesson.

Take a car that’s stuck in reverse, slap a 454 Chevy big block in it. You’ll have a car that still drives the wrong way; but faster.

Me and my 5.000 closest friends don’t like that the website and their 1.300 partners all need my data.

Why so many sig figs for 5 and 1.3 though?

Some parts of the world (mostly Europe, I think) use dots instead of commas for displaying thousands. For example, 5.000 is 5,000 and 1.300 is 1,300.

But usually you don’t put three zeros after the dot for anything else, because that reads as a hint of thousands.

Like 2.50 is 2€50 but 2.500 is 2500€

Is there an ISO standard for this stuff?

No, 2,50€ is 2€ and 50ct, 2.50€ is wrong in this system. 2,500€ is also wrong (for currency, where you only care for two digits after the comma), 2.500€ is 2500€
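For anyone who wants to see the two conventions side by side, here is a minimal Python sketch. It assumes the German-style convention described above (dot for thousands, comma for decimals). `format_dot_thousands` is a made-up helper name, not a standard library function; it just swaps the separators that Python’s built-in format spec produces.

```python
def format_dot_thousands(value: float, decimals: int = 2) -> str:
    """Format a number with '.' for thousands and ',' for decimals.

    Hypothetical helper for illustration only, not a library API.
    """
    # Python's format spec emits the US-style form, e.g. 2500 -> "2,500.00".
    us_style = f"{value:,.{decimals}f}"
    # Swap the two separators in a single pass.
    return us_style.translate(str.maketrans(",.", ".,"))


print(format_dot_thousands(2.5))          # 2,50
print(format_dot_thousands(2500, 0))      # 2.500
print(format_dot_thousands(1234567.891))  # 1.234.567,89
```

Real applications would more likely lean on the `locale` module or a library such as Babel, but those depend on which locales are available on the machine, so the sketch avoids them.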

What if you are displaying a live bill for a service billed monthly, like bandwidth, and you are charged one penny/cent (or whatever Europe’s hundredth is called) per gigabyte? If you use a few megabytes, the bill is less than a hundredth but still exists.

Yes, that’s true, but more of an edge case. Something like gasoline is commonly priced in fractional cents, tho:

I knew the context, was just being cheesy. :-D

oh lol

Too late… You started a war in the comments. I’ll proudly fight for my country’s way to separate numbers!!! :)

Yeah, and they’re wrong.

We (in Europe) probably should be thankful that you are not using feet as thousands-separator over there in the USA… Or maybe separate after each 2nd digit, because why not… ;)

It makes sense from a typographical standpoint: the comma is the larger symbol and thus harder to overlook, especially in small fonts or messy handwriting.

But from a grammatical sense it’s the opposite. In a sentence, a comma is a short pause, while a period is a hard stop. That means it makes far more sense for the comma to be the thousands separator and the period to be the stop between integer and fraction.

I have no strong preference either way. I think both are valid and sensible systems, and it’s only confusing because of competing standards. I think that over a long enough time, due to the internet, the period as the decimal separator will prevail, but it’s gonna happen naturally; it’s not something we can force. Many young people I know already use it that way here in Germany.

Says the country where every science textbook is half science half conversion tables.

Not even close.

Yes, one half is conversion tables. The other half is scripture disproving Darwinism.

Yes. It’s the normal thousands-separator notation in Germany, for example.

It’s not a dead end if you replace all big name search engines with this. Then slowly replace real results with your own. Then it accomplishes something.

I have been shouting this for years. Turing and Minsky were pretty up front about this when they dropped this line of research in like 1952, and even Lovelace predicted this would be bullshit back before the first computer had been built.

The fact that nothing got optimized, and it still didn’t collapse, after DeepSeek kind of gave the whole game away. There’s something else going on here. This isn’t about the technology, because there is no meaningful technology here.

I have been called a killjoy luddite by reddit-brained morons almost every time.

Why didn’t you drop the quotes from Turing, Minsky, and Lovelace?

Because finding the specific stuff they said, which was very broad/vague in Lovelace’s case, and far too technical in Turing’s and Minsky’s cases for anyone with Sam Altman’s dick in their mouth to understand, sounds like actual work. If you’re genuinely curious, you can look up what they had to say. If you’re just here to argue for this shit, you’re not worth the effort.